The Patriot AI Standard: How Ideology Is Splitting Defense Procurement

For the first time since the inception of the Federal Acquisition Regulation (FAR), a vendor’s code of ethics is no longer a compliance asset but a disqualifying liability. In the last quarter alone, 43% of previously eligible software vendors were flagged for review under the new "Mission Alignment" protocols, a shift that effectively demonetizes "safety-first" architectures in the public sector.

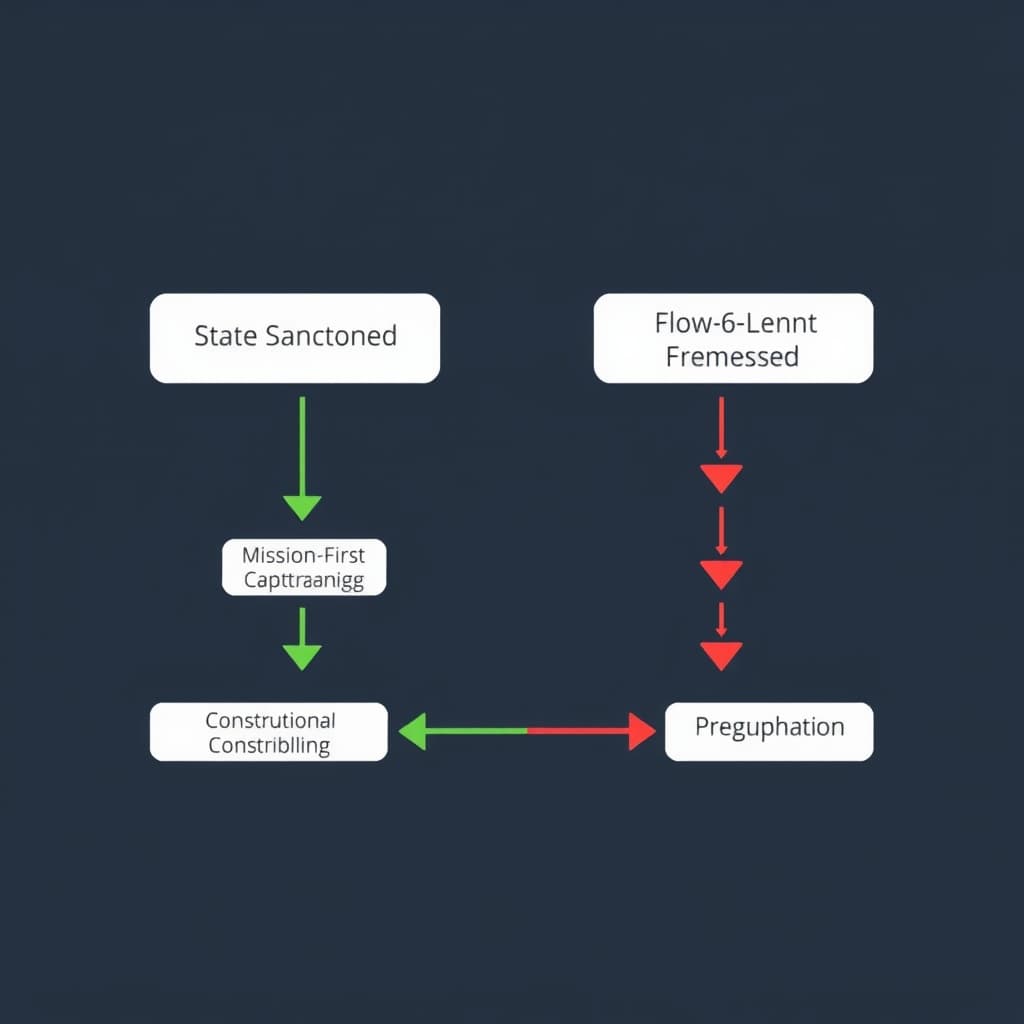

The administration’s recent executive directive to blacklist Anthropic while fast-tracking OpenAI’s integration into Pentagon networks marks the end of the technical meritocracy in defense contracting. We are witnessing the formal bifurcation of the software supply chain. Defense procurement has moved beyond standard security clearances to an ideological filter, creating two distinct classes of AI capital: State-Sanctioned (Green Lane) and State-Excluded (Red Lane).

This analysis examines the mechanics of this split, the immediate disqualification of "Constitutional AI" frameworks, and why the single global software market is effectively dead.

Codifying the 'Patriot AI' Standard

The shift is not merely rhetorical; it is structural. The new procurement standard, colloquially termed "Patriot AI," replaces the previous administration's focus on NIST safety guidelines with a binary test for operational obedience.

The "Refusal Behavior" Disqualifier

The core mechanism of this new standard is the penalization of "Refusal Behaviors." In commercial Large Language Model (LLM) development, safety training often involves teaching the model to refuse queries related to cyberattacks, biological weapons, or kinetic warfare. Under the new Department of Defense (DoD) criteria, these safety rails are categorized as "Mission Assurance Failures."

A model that refuses to generate polymorphic code for an offensive cyber operation due to hard-coded ethical constraints is now viewed as defective equipment. The procurement language has shifted from "Is this safe?" to "Will this execute?" This effectively bars vendors who utilize immutable safety constitutions—most notably Anthropic—from competing for prime contracts.

DEI Statements as Non-Compliance

Simultaneously, the inclusion of Diversity, Equity, and Inclusion (DEI) logic within model weighting or corporate governance structures has been reclassified as a violation of political neutrality clauses. Vendors are now required to certify that their models do not "artificially weigh outputs based on protected class characteristics" beyond accurate demographic data representation.

This creates a paradox for dual-use companies. To sell to the Fortune 500, vendors must often demonstrate robust bias mitigation (DEI alignment). To sell to the Pentagon, they must demonstrate the absence of those same filters.

OpenAI's Pentagon Pivot: A Case Study in Realignment

OpenAI’s rapid authorization following the administration change offers the clearest blueprint for the new era of defense-native AI. The speed of this transition suggests a pre-negotiated alignment strategy that prioritized market dominance over the organization's founding safety principles.

From "Superalignment" to "Dominance"

The internal restructuring at OpenAI, specifically the dissolution of safety-focused teams and the departure of key researchers, was not merely an organizational shuffle; it was a prerequisite for federal eligibility. The new leadership’s pitch deck to the DoD reportedly discarded the "existential risk" narrative in favor of a "strategic overmatch" narrative.

By positioning its models as tools for accelerated decision-making in command-and-control (C2) environments, OpenAI aligned itself with the Pentagon’s Joint All-Domain Command and Control (JADC2) objectives. The value proposition shifted from "safe AI" to "winning AI."

Technical Integration: The "Green Lane"

OpenAI’s acceptance into the "Green Lane" allows for expedited Authority to Operate (ATO) certifications. Unlike standard procurement, which can take 18-24 months, Green Lane vendors are granted provisional clearance to deploy on classified networks within weeks.

Table 1: Operational Differences in the Bifurcated Market

The Anthropic Exclusion and the 'Woke AI' Blacklist

The explicit naming of Anthropic as a "non-aligned entity" serves as a warning shot to the broader venture capital ecosystem. The administration’s critique focuses on "Constitutional AI"—the idea that a model should adhere to a set of principles that supersede the user’s intent. In a national security context, the government demands that the chain of command be the ultimate authority, not a private company's constitution.

The Economic Impact of Sovereign Exclusion

Losing access to US defense spending is not just a revenue hit; it is a signal to allied nations. Members of the "Five Eyes" intelligence alliance prize interoperability and tend to align their procurement standards with one another. If the US blacklists a vendor for "operational unreliability," the UK, Canada, Australia, and New Zealand often follow suit rather than risk incompatible systems.

Anthropic and similar "safety-first" labs are now forced to rely entirely on commercial enterprise revenue. While the commercial market is vast, the absence of sovereign capital flows, which are massive in scale and largely insulated from recessions, creates a valuation gap. Investors must now price in the risk of regulatory hostility.

Can Enterprise Revenue Sustain "Red Lane" Vendors?

The commercial sector may offer a temporary sanctuary, but the bifurcation is bleeding into the private sector. Defense contractors such as Lockheed Martin and Northrop Grumman, which act as prime integrators, are now contractually prohibited by strict flow-down clauses from embedding "Red Lane" models in their subsystems. This cuts off a significant portion of the B2B market for Anthropic.

Venture Capital in a Bifurcated Market (2026-2028)

The venture landscape is reorganizing around this split. We are seeing the emergence of "Defense-Native" funds that explicitly filter for political alignment, treating "safetyism" as a negative signal for potential returns.

The Valuation Gap

We project a widening valuation gap between State-Sanctioned and Commercial-Only AI labs. State-Sanctioned labs will command a premium due to their "sovereign moat": the recurring, multi-year revenue of government contracts and the regulatory protection that comes with being a national champion. Commercial-Only labs will face greater scrutiny and potential antitrust pressure, as they lack the political air cover afforded to defense partners.

Market Prediction: The Corporate Firewall

Falsifiable Claim: By Q4 2026, at least one major hyperscaler (Microsoft, Amazon, or Google) will formally split its AI model offerings into two legally distinct subsidiaries: one compliant with "Patriot AI" standards for government clients, and one maintaining global safety standards for EU and commercial clients.

Watch for these indicators:

- Divergent API Endpoints: The release of "Gov-Specific" model weights that differ fundamentally from public commercial weights, not only in security hardening but also in refusal behaviors.

- Board Restructuring: The appointment of retired generals or former defense officials to the boards of specific subsidiary units while retaining academic/safety figures on the commercial board.

- Legal Incorporation: The creation of "US Sovereign" entities that are firewalled from the parent company's global DEI policies.

Conclusion

The era of the "neutral platform" is over. Defense contractors and AI labs must now choose between global commercial neutrality and US government alignment. There is no middle ground remaining for fence-sitters. For the DoD, the priority is clear: lethality and obedience over safety and equity. For the industry, this means the software supply chain has permanently splintered, forcing every CTO to decide which master their code will serve.

FAQ

Does the Anthropic ban extend to subcontractors using its API?

Yes. The new procurement language includes strict flow-down clauses (FAR 52.204-XX). Prime contractors found integrating "non-aligned" models at any tier, even for non-kinetic tasks such as logistics or summarization, face immediate contract termination and potential debarment.

How does this affect open-weight models like Llama?

Open-weight models occupy a gray area. While not explicitly banned yet, the administration has signaled that models with "embedded refusal behaviors" regarding offensive cyber operations will be treated similarly to blacklisted proprietary vendors. However, "uncensored" open-weight models may actually find a faster path to adoption if they can be securely hosted within air-gapped government environments.