The Synthetic Proof-of-Life Crisis: Why Markets Require Cryptographic Verification

In March 2026, a seemingly routine video of Israeli Prime Minister Benjamin Netanyahu at a Jerusalem coffee shop, released to dispel rumors of his death, was immediately flagged by open-source intelligence networks and AI models as a high-fidelity deepfake. The incident forced algorithmic trading desks to halt automated geopolitical sentiment parsing and exposed a critical vulnerability in how financial infrastructure processes breaking news. Viewed through the lens of information theory and market microstructure, the event points to a stark conclusion: high-fidelity generative AI has stripped visual evidence of its evidentiary value. The resulting epistemological void directly threatens global market stability, and legacy verification methods must yield to cryptographic authentication frameworks.

The Epistemological Collapse of Digital Evidence

Deepfakes Outpacing Traditional Forensics

Historically, digital forensics relied on detecting pixel inconsistencies, unnatural lighting, or compression artifacts. Today, diffusion models and generative adversarial networks produce images whose noise profiles are close to statistically indistinguishable from genuine sensor output, making pixel-level analysis unreliable on its own. The core constraint of traditional forensics is its reactive nature: it needs time and computational resources to analyze media after it has been disseminated. By the time a forensic consensus is reached, financial markets have already priced in the event, traded on the momentum, and suffered the resulting volatility.

The Netanyahu Incident as a Market Catalyst

The March 2026 Netanyahu video exemplifies this collapse. Following Iranian media claims of his demise, his office released footage of him at a cafe. Rather than calming markets, the video sparked intense scrutiny over unnatural coffee cup physics and facial distortions, leading AI models to declare it synthetic. For sovereign bond markets and currency exchanges, the inability to verify the physical status of a nuclear-armed state's leader within a standard trading window created a pricing vacuum. This event catalyzed a shift among institutional investors, who now discount unverified digital media entirely.

Quantifying the Financial Impact of Synthetic Uncertainty

Algorithmic Trading Reactions to Unverified Media

Quantitative funds deploy natural language processing and computer vision models to scrape social media for alpha. When these models ingest synthetic media, they trigger cascading algorithmic reactions. The 2023 AI-generated image of an explosion near the Pentagon reportedly erased roughly $500 billion in market capitalization for a few minutes before prices recovered. By 2026, the sheer volume of synthetic media has forced trading firms to lower the confidence they assign to unverified inputs. Algorithms are now programmed to widen spreads or withdraw liquidity entirely when breaking geopolitical news lacks cryptographic signatures, producing flash droughts in market liquidity.
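The spread-widening behavior described above can be sketched as a simple quoting rule. This is a minimal illustration, not any firm's actual logic: the `NewsItem` type, the `quote_spread_bps` function, and the default `widen_factor` and `withdraw_above_bps` values are all hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class NewsItem:
    headline: str
    geopolitical: bool
    signature_verified: bool  # assumed to be set by an upstream provenance check


def quote_spread_bps(
    base_spread_bps: float,
    item: NewsItem,
    widen_factor: float = 3.0,         # hypothetical multiplier for unverified news
    withdraw_above_bps: float = 50.0,  # hypothetical limit beyond which quotes are pulled
) -> Optional[float]:
    """Return the spread to quote in basis points, or None to withdraw liquidity."""
    # Verified or non-geopolitical news leaves the quote unchanged.
    if not item.geopolitical or item.signature_verified:
        return base_spread_bps
    # Unverified geopolitical news: widen first, withdraw if the result is too wide.
    widened = base_spread_bps * widen_factor
    if widened > withdraw_above_bps:
        return None
    return widened
```

With these assumed defaults, a 5 bps base spread widens to 15 bps on unsigned geopolitical news, while a 20 bps base spread would exceed the limit and the desk would stop quoting.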

The Cost of Delayed Geopolitical Intelligence

The second-order effect of this uncertainty is the expansion of risk premiums. When intelligence cannot be visually verified, supply chain managers hedge against worst-case scenarios, and energy markets price in conflict disruptions that may not actually exist. The time delta between the release of synthetic media and its definitive debunking represents a window of maximum financial extraction for malicious actors.

Map of Incentives

To understand the friction in resolving this crisis, one must map the underlying incentives:

- Winners: State-sponsored actors and short-sellers who weaponize volatility via synthetic media generation; hardware manufacturers designing secure enclaves for the new verification economy.

- Losers: Traditional media syndicates losing their monopoly on verification; quantitative funds whose sentiment-scraping algorithms are poisoned by synthetic noise; governments that rely on the plausible deniability of unverified media.

Architecting Cryptographic Authentication Frameworks

Hardware-Level Provenance and Secure Enclaves

Software-based watermarking is insufficient because it occurs post-capture. True cryptographic proof-of-life requires hardware-level provenance. This mechanism relies on Trusted Execution Environments (TEEs) or secure enclaves embedded directly into camera image signal processors. At the exact moment light hits the sensor, the hardware generates a cryptographic hash of the raw data, appending a timestamp and GPS coordinates. This data is signed using a private key burned into the silicon, creating a tamper-evident chain of custody before the file ever reaches an operating system.
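The capture-time flow (hash the raw data, attach timestamp and GPS, sign with a device key) can be illustrated with the standard library alone. This sketch swaps in a Lamport one-time signature, a simple hash-based scheme, purely so the example runs without third-party packages; a real enclave would use whatever scheme its vendor implements, and the private key would never leave the silicon. All function names and the record layout are hypothetical.

```python
import hashlib
import json
import secrets


def sha256(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def generate_keypair():
    # One pair of 32-byte secrets per bit of a SHA-256 digest.
    private = [(secrets.token_bytes(32), secrets.token_bytes(32)) for _ in range(256)]
    public = [(sha256(a), sha256(b)) for a, b in private]
    return private, public


def digest_bits(message: bytes):
    digest = sha256(message)
    return [(digest[i // 8] >> (7 - i % 8)) & 1 for i in range(256)]


def sign(private, message: bytes):
    # Reveal one secret per digest bit. A Lamport key must sign only ONE message.
    return [private[i][bit] for i, bit in enumerate(digest_bits(message))]


def verify(public, message: bytes, signature) -> bool:
    bits = digest_bits(message)
    return len(signature) == 256 and all(
        sha256(signature[i]) == public[i][bit] for i, bit in enumerate(bits)
    )


def capture_record(raw_sensor_bytes: bytes, timestamp: str, lat: float, lon: float) -> bytes:
    # Hypothetical record layout: hash of the raw data plus capture metadata.
    record = {
        "sensor_sha256": hashlib.sha256(raw_sensor_bytes).hexdigest(),
        "timestamp": timestamp,
        "gps": [lat, lon],
    }
    return json.dumps(record, sort_keys=True).encode()
```

Verifying the signature against a record built from different sensor bytes, a different timestamp, or different coordinates fails, which is the tamper-evidence property the paragraph describes.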

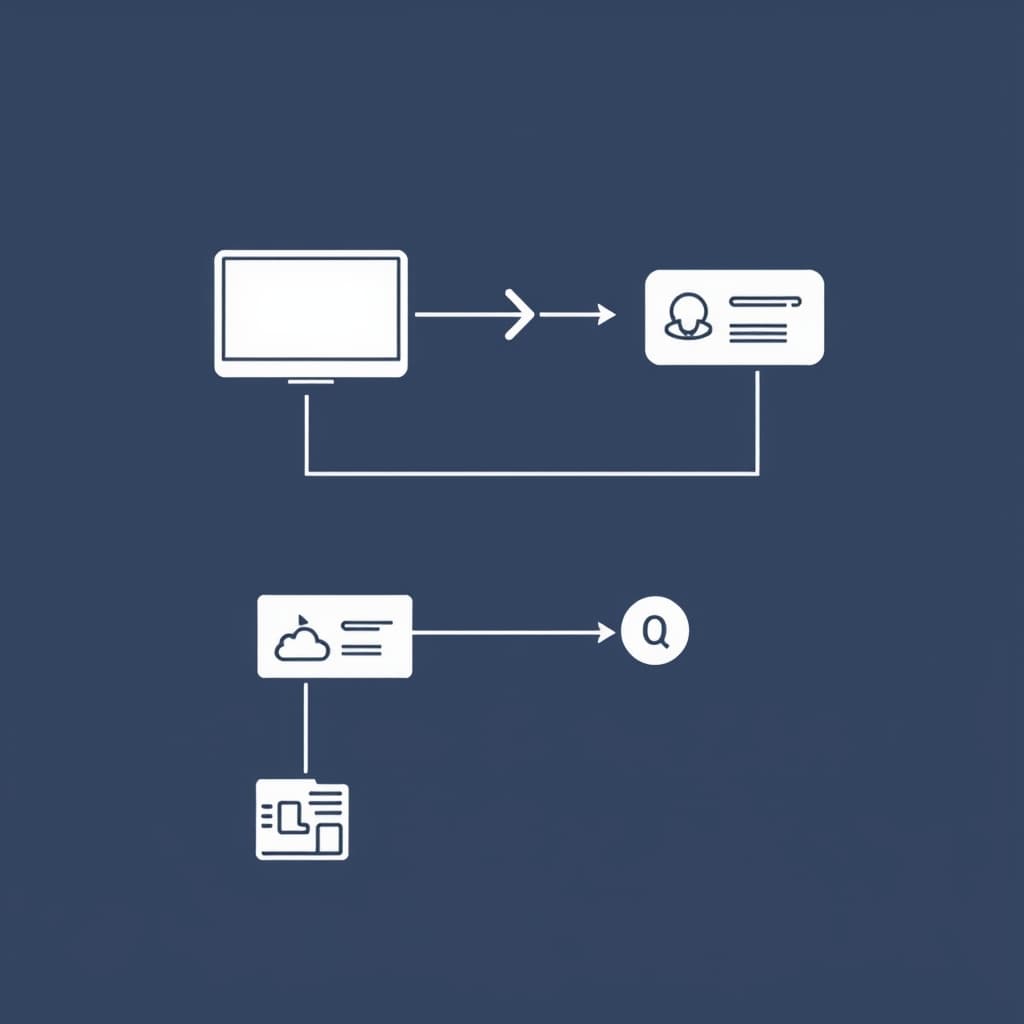

Decentralized Public Key Infrastructure for World Leaders

A hardware signature is only as reliable as the public key infrastructure (PKI) used to verify it. Centralized PKIs are vulnerable to state coercion; a rogue government could compel a certificate authority to validate synthetic media. To counteract this, verification must transition to a Decentralized Public Key Infrastructure (DPKI). In this model, the public keys of critical geopolitical figures are anchored to a distributed ledger, ensuring that no single entity can retroactively alter the registry to authenticate a deepfake.
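One way to picture the "no single entity" property is a client that reads a leader's key fingerprint from several independent ledger replicas and accepts it only when a supermajority agree. This is a simplified sketch under that assumption; real distributed ledgers rely on full consensus protocols, and `quorum_fingerprint` is a hypothetical helper, not part of any DPKI standard.

```python
from collections import Counter
from typing import Optional, Sequence


def quorum_fingerprint(
    replica_answers: Sequence[Optional[str]], threshold: int
) -> Optional[str]:
    """Return the fingerprint reported by at least `threshold` replicas, else None.

    `replica_answers` holds one fingerprint per replica (None if it did not answer).
    """
    # A strict majority threshold guarantees at most one fingerprint can win.
    if threshold * 2 <= len(replica_answers):
        raise ValueError("threshold must be a strict majority of replicas")
    counts = Counter(answer for answer in replica_answers if answer is not None)
    if not counts:
        return None
    fingerprint, votes = counts.most_common(1)[0]
    return fingerprint if votes >= threshold else None
```

A single coerced replica reporting a substituted key cannot change the outcome, while a registry split evenly between two fingerprints yields no accepted key at all.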

Implementing Zero-Trust Media Protocols

C2PA Standards and Real-Time Watermarking

The Coalition for Content Provenance and Authenticity (C2PA) has established the foundational architecture for this transition. Version 2.2 of the C2PA specification defines how secure assertions (such as the device used, the creator, and any subsequent edits) are bound to the media file via a digitally signed manifest. If a single pixel is altered without a new signed manifest recording the change, the asset's hash no longer matches the one bound in the manifest, and the content credential fails validation.
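The hash binding between a manifest and its asset can be shown with a toy structure. This is not the C2PA format: the real specification defines its own container and signature encodings, which this plain dictionary does not reproduce, and the signature over the manifest is omitted here. `make_manifest` and `binding_intact` are hypothetical names.

```python
import hashlib
from typing import List


def make_manifest(asset: bytes, device: str, creator: str, edits: List[str]) -> dict:
    # Toy manifest: a few assertions plus a hash that binds them to the asset bytes.
    return {
        "assertions": {"device": device, "creator": creator, "edits": list(edits)},
        "asset_sha256": hashlib.sha256(asset).hexdigest(),
    }


def binding_intact(asset: bytes, manifest: dict) -> bool:
    # Recompute the asset hash and compare it with the value stored in the manifest.
    return hashlib.sha256(asset).hexdigest() == manifest["asset_sha256"]
```

Flipping even one bit of the asset changes its SHA-256 digest, so `binding_intact` returns `False` unless a new manifest is issued for the modified bytes.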

Overcoming Adoption Friction in Government Sectors

Despite the technical viability of C2PA, government adoption faces severe political friction. The primary constraint is the loss of strategic ambiguity. If a head of state normalizes the use of cryptographically signed proof-of-life videos, any future communication lacking that signature will be automatically presumed fake or recorded under duress. This binary state forces governments to maintain perfect operational security, a standard many intelligence agencies view as an unacceptable limitation on their psychological operations capabilities.

The Road to 2030: Mandatory Proof-of-Life Compliance

Regulatory Shifts in Financial Information Systems

Financial regulators are beginning to view unverified media as a systemic market risk. The U.S. Securities and Exchange Commission (SEC) has already escalated enforcement against "AI washing" and AI-driven market manipulation. By 2030, regulatory frameworks will likely mandate that financial data terminals filter unverified media from their institutional feeds. Publicly traded companies and sovereign entities will be required to submit cryptographically signed disclosures to prevent synthetic panic selling.

The Rise of Sovereign Identity Oracles

The integration of cryptographic media into financial markets will give rise to Sovereign Identity Oracles: specialized nodes that bridge hardware-signed media with smart contracts and trading algorithms. These oracles will parse C2PA manifests in real time, executing trades only when a geopolitical event is verified by an unbroken chain of cryptographic custody. This infrastructure will fundamentally decouple market reactions from social media sentiment.
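The "unbroken chain of custody" gate might look like the following sketch, in which each processing step records the hash of the step before it. The link layout, the function names, and the `signatures_valid` flag (standing in for signature checks done elsewhere) are assumptions for illustration, not a description of any deployed oracle.

```python
import hashlib
import json
from typing import List


def link_hash(link: dict) -> str:
    # Canonical JSON so the same link always hashes to the same value.
    return hashlib.sha256(json.dumps(link, sort_keys=True).encode()).hexdigest()


def make_chain(steps: List[str]) -> List[dict]:
    chain, parent = [], None
    for step in steps:
        link = {"action": step, "parent": parent}
        chain.append(link)
        parent = link_hash(link)
    return chain


def custody_unbroken(chain: List[dict]) -> bool:
    # The first link must have no parent; every later link must point at its predecessor.
    if not chain or chain[0]["parent"] is not None:
        return False
    return all(
        chain[i]["parent"] == link_hash(chain[i - 1]) for i in range(1, len(chain))
    )


def oracle_decision(chain: List[dict], signatures_valid: bool) -> str:
    # Trade only when signatures check out AND the custody chain is intact.
    return "EXECUTE" if signatures_valid and custody_unbroken(chain) else "HOLD"
```

Rewriting an intermediate step (for example, replacing a crop with a face swap) changes that link's hash, so the following link no longer points at it and the oracle holds. The final link is protected only by its signature, which is why both checks are required.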

What would change my mind on this trajectory: If quantum computing breaks current elliptic curve cryptography faster than the National Institute of Standards and Technology (NIST) can standardize and deploy post-quantum algorithms, hardware-level signatures could be spoofed retroactively. This scenario would render current secure enclaves obsolete, completely neutralizing cryptographic provenance and forcing markets to rely on multi-party physical verification rather than digital cryptography.

Conclusion

Visual trust is obsolete. The transition to cryptographic truth is now a fundamental requirement for market stability, with hardware-level provenance poised to become the standard for geopolitical communications. As generative AI continues to erode the epistemological foundation of digital evidence, the financial system's survival depends entirely on adopting zero-trust media protocols.

FAQ

What exactly constitutes a cryptographic proof-of-life?

It involves combining hardware-secured biometric capture with real-time digital signatures, ensuring the media was recorded by a specific individual at a precise time and location without subsequent alteration.

How do financial markets currently price in the risk of synthetic media?

Markets are applying an uncertainty discount to breaking news involving key figures until secondary, non-digital verification is established, leading to brief but intense volatility spikes driven by algorithmic trading.

Sources

- Coalition for Content Provenance and Authenticity (C2PA) Technical Specification

- U.S. Securities and Exchange Commission: AI, Deepfakes, and the Future of Financial Deception

- NIST Cryptographic Standards and Guidelines

- Harter Secrest & Emery LLP: Navigating AI Risks and SEC Enforcement Trends

- The Regulatory Review: AI and the Future of Market Manipulation

- Aspen Policy Academy: NIST and Information Integrity Risks from Synthetic Text
