Beyond Copilots: The Structural Shift to Native Agentic IDEs

When a mid-sized fintech in London attempted to refactor its legacy Objective-C payment gateway last month, it didn't hire a consultancy; it deployed an instance of Xcode 26.3 with permission to access the local file system and build tools. The result wasn't just code completion: it was a 40% reduction in technical debt with zero human keystrokes spent on syntax. That was possible because the IDE didn't just suggest code. It compiled, tested, and reverted its own hallucinations until the build passed. This marks the end of the "Copilot" era and the beginning of Native Agentic IDEs, a shift that fundamentally alters the labor economics of software engineering.

The release of Xcode 26.3 is not a feature update; it is a hostile takeover of the development lifecycle by the environment itself. We are moving from a paradigm where AI suggests text to one where the IDE hosts autonomous agents capable of multi-step execution, direct access to build and debug state, and self-correction. This piece dissects why embedding OpenAI and Anthropic models directly into the core runtime, rather than reaching them through a thin API wrapper, creates a new, arguably dangerous, layer of abstraction.

The Architecture of Autonomy: Deep Integration vs. API Wrappers

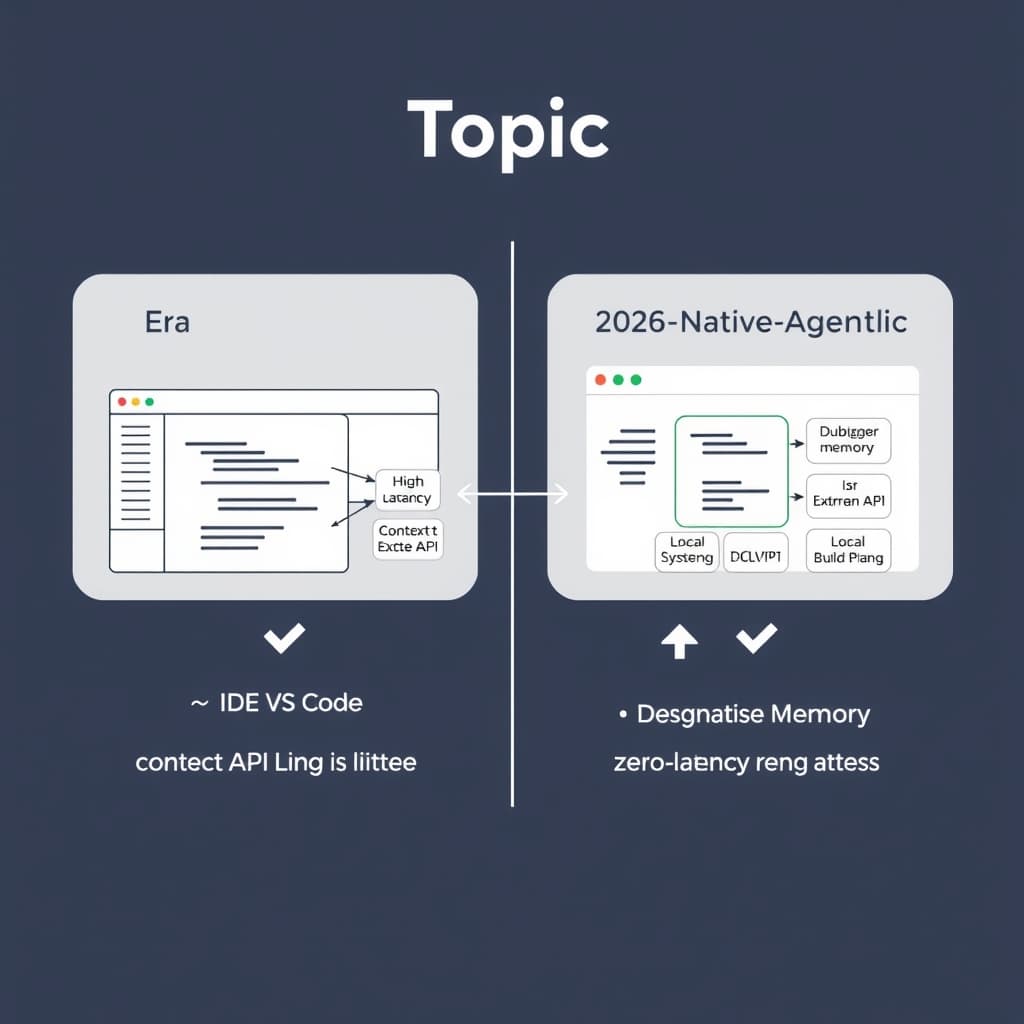

The previous generation of AI coding tools (2023–2025) suffered from a fatal architectural flaw: they were text-processing engines divorced from the compiler. Tools like the original GitHub Copilot treated code as natural language, predicting the next token based on statistical probability rather than semantic validity.

Native Agentic IDEs invert this. By integrating the model inference layer directly with the Language Server Protocol (LSP) and the Abstract Syntax Tree (AST), the agent no longer "guesses" variable names—it reads the symbol table.
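The gap between string matching and syntax-aware lookup is easy to demonstrate. The sketch below is not how Xcode or any LSP server does it; it uses Python's standard `ast` module as a stand-in, and the `PaymentGateway` snippet is an invented example.

```python
import ast

# Invented sample: the class name appears in a definition, a comment,
# a string literal, and one real reference.
SOURCE = '''
class PaymentGateway:
    pass

def charge():
    # PaymentGateway is mentioned in this comment
    label = "PaymentGateway"
    return PaymentGateway()
'''

def string_match_lines(source: str, name: str) -> list[int]:
    """Naive approach: every line whose text contains the name."""
    return [i for i, line in enumerate(source.splitlines(), start=1) if name in line]

def ast_reference_lines(source: str, name: str) -> list[int]:
    """Syntax-aware approach: only identifier nodes that refer to the name."""
    tree = ast.parse(source)
    return sorted(
        node.lineno
        for node in ast.walk(tree)
        if isinstance(node, ast.Name) and node.id == name
    )

text_hits = string_match_lines(SOURCE, "PaymentGateway")
ast_hits = ast_reference_lines(SOURCE, "PaymentGateway")
print("text matches on lines:", text_hits)
print("AST references on lines:", ast_hits)
```

The text search also flags the comment and the string literal, while the tree walk reports only the line where the class is actually used. A refactoring agent needs the second kind of answer.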

Moving Beyond the Text Buffer

In the Xcode 26.3 implementation, the agent does not merely scrape the text buffer. It has read/write access to compiler flags and build artifacts. When a developer requests a refactor, the agent can:

- Analyze the AST to identify all references to a class, not just string matches.

- Attempt the refactor.

- Trigger a headless build.

- Parse the Clang error logs if the build fails.

- Iterate on the fix before presenting the result to the human.

This loop—Modify, Compile, Analyze, Repeat—was previously the domain of the human developer. By tightening this loop inside the IDE's local runtime, we eliminate the context-switching tax that plagued early LLM adoption.
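As a rough illustration of that loop, here is a minimal sketch. The `build` and `propose_fix` callables are hypothetical stand-ins for a headless build and a model call; nothing here reflects Xcode's actual internals.

```python
from dataclasses import dataclass
from typing import Callable

@dataclass
class BuildResult:
    ok: bool
    errors: list[str]

def agent_loop(
    source: str,
    build: Callable[[str], BuildResult],
    propose_fix: Callable[[str, list[str]], str],
    max_iterations: int = 5,
) -> tuple[str, bool, int]:
    """Modify, compile, analyze, repeat until the build passes or the budget runs out.

    Returns (final_source, build_passed, builds_run).
    """
    candidate = source
    for attempt in range(1, max_iterations + 1):
        result = build(candidate)
        if result.ok:
            return candidate, True, attempt
        # Feed the compiler errors back into the next attempt.
        candidate = propose_fix(candidate, result.errors)
    # Budget exhausted: return the original source so a failed edit is never kept.
    return source, False, max_iterations

# Toy stand-ins: the "build" fails while any TODO remains,
# and each "fix" resolves exactly one of them.
def fake_build(src: str) -> BuildResult:
    remaining = src.count("TODO")
    errors = [f"{remaining} unresolved TODO(s)"] if remaining else []
    return BuildResult(ok=remaining == 0, errors=errors)

def fake_fix(src: str, errors: list[str]) -> str:
    return src.replace("TODO", "done", 1)

final, passed, builds = agent_loop("TODO; TODO", fake_build, fake_fix)
```

The iteration cap and the revert-on-failure branch are the design choices that matter: without them, a loop like this can burn compute indefinitely or leave a broken edit in place.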

Latency Reduction via On-Device Distillation

The economic viability of this model relies on hybrid inference. Sending the entire project state to a cloud endpoint for every minor logic check is cost-prohibitive and introduces unacceptable latency. The structural shift here is the use of quantized, on-device models (specifically optimized for Apple Silicon in this context) to handle the "OODA loop" (Observe, Orient, Decide, Act) of the build process, while only offloading complex reasoning tasks to the cloud. This allows the IDE to maintain a "thought process" that feels instantaneous to the user, masking the asynchronous calls to larger models.
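One way to picture the hybrid split is a small routing function. This is a sketch under stated assumptions: the `Task` fields, the token budget, and the routing rule are all invented for illustration, not documented behavior of any IDE.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Task:
    description: str
    context_tokens: int      # how much project state the task needs
    needs_multi_file: bool   # whether the change spans several files

# Hypothetical capacity of the quantized on-device model.
LOCAL_TOKEN_BUDGET = 4_000

def route(task: Task) -> str:
    """Return "local" for quick checks and "cloud" for heavier reasoning."""
    if task.needs_multi_file or task.context_tokens > LOCAL_TOKEN_BUDGET:
        return "cloud"
    return "local"

quick_check = Task("explain this compiler error", context_tokens=600, needs_multi_file=False)
big_refactor = Task("split the module into services", context_tokens=90_000, needs_multi_file=True)
print(route(quick_check), route(big_refactor))
```

A real router would presumably weigh more signals than these two, but the economics stay the same: the cheap path handles the frequent small checks, and the expensive path is reserved for the rare large ones.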

From Code Completion to State Management

The most profound change is the shift in responsibility from writing syntax to managing state. In 2024, if you wanted to split a monolith into microservices, you used an LLM to generate the boilerplate, but you—the human—had to wire the dependencies, fix the imports, and ensure the configuration files matched.

Handling Multi-File Refactoring

Native agents treat the entire repository as a graph, not a collection of text files. Because the agent has access to the file system and version control, it can orchestrate changes across 50+ files simultaneously.
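Treating the repository as a graph can be sketched with a plain dependency map. The file names and the `DEPENDS_ON` structure below are hypothetical; a real agent would derive the graph from the compiler or language server rather than from a hand-written dictionary.

```python
from collections import deque

# Hypothetical import graph: each file maps to the files it depends on.
DEPENDS_ON = {
    "Checkout.swift": ["PaymentGateway.swift", "Cart.swift"],
    "Refunds.swift": ["PaymentGateway.swift"],
    "Cart.swift": ["Models.swift"],
    "PaymentGateway.swift": ["Models.swift"],
    "Models.swift": [],
}

def affected_files(changed: str, depends_on: dict[str, list[str]]) -> list[str]:
    """Return every file that directly or transitively depends on `changed`."""
    # Invert the edges so we can walk from a file to its dependents.
    dependents: dict[str, list[str]] = {name: [] for name in depends_on}
    for name, deps in depends_on.items():
        for dep in deps:
            dependents.setdefault(dep, []).append(name)

    seen = {changed}
    queue = deque([changed])
    while queue:  # breadth-first walk over the dependents
        current = queue.popleft()
        for dependent in dependents.get(current, []):
            if dependent not in seen:
                seen.add(dependent)
                queue.append(dependent)
    seen.discard(changed)
    return sorted(seen)

print(affected_files("Models.swift", DEPENDS_ON))
```

Changing `Models.swift` touches every other file in this toy graph, which is the kind of blast radius a text-only tool cannot see.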

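Consider the difference in workflow: in 2024, the LLM produced the boilerplate and the human wired the dependencies, fixed the imports, and reconciled the configuration files by hand. With a native agent, those cross-file edits are part of the same orchestrated change.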

Supervising the Debugging Loop

We are transitioning from "prompting" to "supervising." In a Native Agentic environment, the debugger is no longer a passive tool for the human to inspect memory. It is a sensor for the agent. When an exception is thrown, the agent inspects the stack trace, correlates it with the source code, and proposes a patch. The developer’s role shifts to auditing the logic of the fix rather than the syntax of the implementation. This requires a higher order of thinking; you cannot blindly trust an agent that has write access to your production config.
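The "debugger as sensor" idea reduces to turning a stack trace into structured data an agent can act on. The sketch below uses Python's standard `traceback` module purely as an analogy; the `settle` function and the report fields are invented for illustration.

```python
import traceback

def locate_failure(exc: BaseException) -> dict:
    """Summarize where an exception was raised, using the innermost stack frame."""
    frames = traceback.extract_tb(exc.__traceback__)
    innermost = frames[-1]  # frames are ordered from outermost call to innermost
    return {
        "error": type(exc).__name__,
        "message": str(exc),
        "function": innermost.name,
        "line": innermost.lineno,
    }

def settle(amount: float, parts: int) -> float:
    return amount / parts

try:
    settle(100, 0)
except ZeroDivisionError as exc:
    report = locate_failure(exc)

print(report)
```

A report like this is what the agent would correlate with the source before proposing a patch. The human's job is to decide whether the proposed fix addresses the cause or only silences the symptom.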

The Vendor Lock-in Trap: When the IDE Becomes the Manager

While the productivity gains are undeniable, the strategic implications for enterprise architecture are concerning. Apple, Microsoft, and JetBrains are effectively building walled gardens where the "intelligence" is coupled tightly with the proprietary runtime.

The Runtime Control Layer

If the agent is responsible for configuring the build environment, the vendor controlling the agent controls the standards. In Xcode 26.3, the seamless integration of Swift 6 concurrency features is driven by agents that preferentially suggest and implement Apple-specific patterns. For cross-platform teams, this creates divergence pressure: an agent optimized for Xcode will naturally pull codebases toward Apple's ecosystem, making it harder to maintain generic C++ or Rust cores that compile equally well on Linux or Windows.

Cost Analysis: Seats vs. Compute

We are witnessing the death of the flat per-seat license. The new economic model is consumption-based.

- Old Model: $19/month for access to the tool.

- New Model: Base fee + cost per "Agentic Build Cycle."

Enterprises must now calculate the ROI of an agent fixing a bug versus a junior developer fixing it. If an agent burns $4.00 in token costs to resolve a race condition that would take a human four hours ($200+), the math works. However, this makes development costs variable and opaque, tied directly to the efficiency of the vendor's model rather than the efficiency of the staff.
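The arithmetic behind that comparison is simple enough to write down. The $50 hourly rate is an assumption backed out of the figures above ($200 for four hours); the function names are illustrative.

```python
def human_cost(hours: float, hourly_rate: float) -> float:
    """Cost of a developer spending `hours` on the fix."""
    return hours * hourly_rate

def savings_ratio(agent_cost: float, hours: float, hourly_rate: float) -> float:
    """How many times cheaper the agent is than the human for one fix."""
    return human_cost(hours, hourly_rate) / agent_cost

def max_affordable_attempts(cost_per_attempt: float, hours: float, hourly_rate: float) -> int:
    """Number of agent attempts that cost no more than the human fix."""
    return int(human_cost(hours, hourly_rate) // cost_per_attempt)

HOURLY_RATE = 50.0  # assumption: $200 / 4 hours, taken from the example above

ratio = savings_ratio(4.00, hours=4, hourly_rate=HOURLY_RATE)
attempts = max_affordable_attempts(4.00, hours=4, hourly_rate=HOURLY_RATE)
print(f"agent is {ratio:.0f}x cheaper; break-even after {attempts} attempts")
```

The second number is the one finance teams should watch. The margin holds only while the agent converges in a handful of attempts, and that convergence rate is set by the vendor's model, not by the team.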

The 2030 Horizon: Self-Healing Repositories

Looking forward, the distinction between "writing code" and "maintaining software" will vanish. We are heading toward "maintenance-free" legacy codebases where background agents run continuous refactoring loops.

The Rise of Verification Layers

As agents generate more code, the security bottleneck moves to verification. We will see the emergence of "adversarial agentic testing"—where one AI system writes the code, and a separate, isolated AI system attempts to penetrate it. Security teams will no longer audit code; they will audit the parameters of the verification agents.

Falsifiable Claim

Prediction: By Q4 2028, over 30% of enterprise "commits" will be authored by agents and merged without a human ever viewing the raw diff, relying solely on high-level logic approval and passing verification suites.

Indicators to Watch:

- Git Platform Metrics: A sharp rise in commits signed by "machine users" or specific bot IDs relative to human accounts.

- Job Market Shifts: A decline in "Junior Developer" listings paired with a rise in "Integration Specialist" or "AI Systems Architect" roles.

- Regulatory Frameworks: The introduction of ISO standards specifically for "AI-generated code liability," mandating human-in-the-loop review for critical infrastructure (a direction already hinted at by the human-oversight requirements the EU AI Act places on high-risk systems).
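The first indicator is the easiest to track in practice. A minimal sketch, assuming you already have a list of commit author names (for example, exported from your Git host); the `bot_ids` set and the sample authors are hypothetical, and the `[bot]` suffix check follows the naming GitHub uses for app accounts.

```python
def machine_commit_share(authors: list[str], bot_ids: set[str]) -> float:
    """Fraction of commits authored by known bot IDs or "[bot]"-suffixed accounts."""
    if not authors:
        return 0.0
    machine = sum(1 for name in authors if name in bot_ids or name.endswith("[bot]"))
    return machine / len(authors)

# Hypothetical sample of commit authors for one week.
sample_authors = ["alice", "dependabot[bot]", "refactor-agent", "bob"]
share = machine_commit_share(sample_authors, bot_ids={"refactor-agent"})
print(f"{share:.0%} of commits were machine-authored")
```

Tracked over time, this ratio gives a direct check on the 30% prediction, though it cannot tell you whether a human reviewed the diff before merge.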

Conclusion

Native Agentic IDEs are not merely a productivity boost; they define a new abstraction layer where the developer acts as the architect and the IDE functions as the general contractor. The release of Xcode 26.3 proves that the future is not in better chat interfaces, but in deeper runtime integration. As VS Code and JetBrains scramble to match this level of AST-aware autonomy, the ability to audit and manage these agents—rather than just prompt them—will become the defining skill of the next decade. The code is no longer the product; the process of generating the code is.

FAQ

How does a Native Agentic IDE differ from using GitHub Copilot?

Copilot primarily functions as a predictive text engine or a chat interface, effectively decoupled from the build system. A Native Agentic IDE grants the AI permissions to execute terminal commands, modify multiple files simultaneously, read the Abstract Syntax Tree (AST), and run debuggers autonomously without constant human approval for every micro-step.

What are the security risks of granting agents runtime access?

The primary risks are supply chain injection and "hallucinated dependencies." An agent might inadvertently import a compromised package to satisfy a build requirement. Furthermore, without strict sandboxing, an agent could theoretically exfiltrate local environment variables or keys during a "debugging" step. Enterprise adoption requires strict "human-in-the-loop" commit verification protocols.
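One simple guard against hallucinated dependencies is to compare what agent-written code imports against an approved list before the commit is accepted. The sketch below uses Python's standard `ast` module; the allowlist contents and the misspelled package name are invented for illustration, and a real pipeline would check the project's manifest and lockfile as well.

```python
import ast

def imported_packages(source: str) -> set[str]:
    """Collect the top-level package names imported by a piece of Python source."""
    found: set[str] = set()
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            found.update(alias.name.split(".")[0] for alias in node.names)
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            # level == 0 means an absolute import; relative imports stay inside the project.
            found.add(node.module.split(".")[0])
    return found

def unapproved_imports(source: str, allowlist: set[str]) -> list[str]:
    """Return imported packages that are not on the allowlist, sorted for stable output."""
    return sorted(imported_packages(source) - allowlist)

# Hypothetical agent-written snippet with one misspelled (possibly squatted) package.
AGENT_PATCH = """
import json
import requests
from reqeusts_toolbelt import MultipartEncoder
from os import path
"""

flagged = unapproved_imports(AGENT_PATCH, allowlist={"json", "os", "requests"})
print("needs human review:", flagged)
```

A check like this does not prove a package is safe. It only ensures that any new dependency reaches a human before it reaches the build.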