WebAssembly-Native Python Execution: Redefining Browser-Based Application Architecture

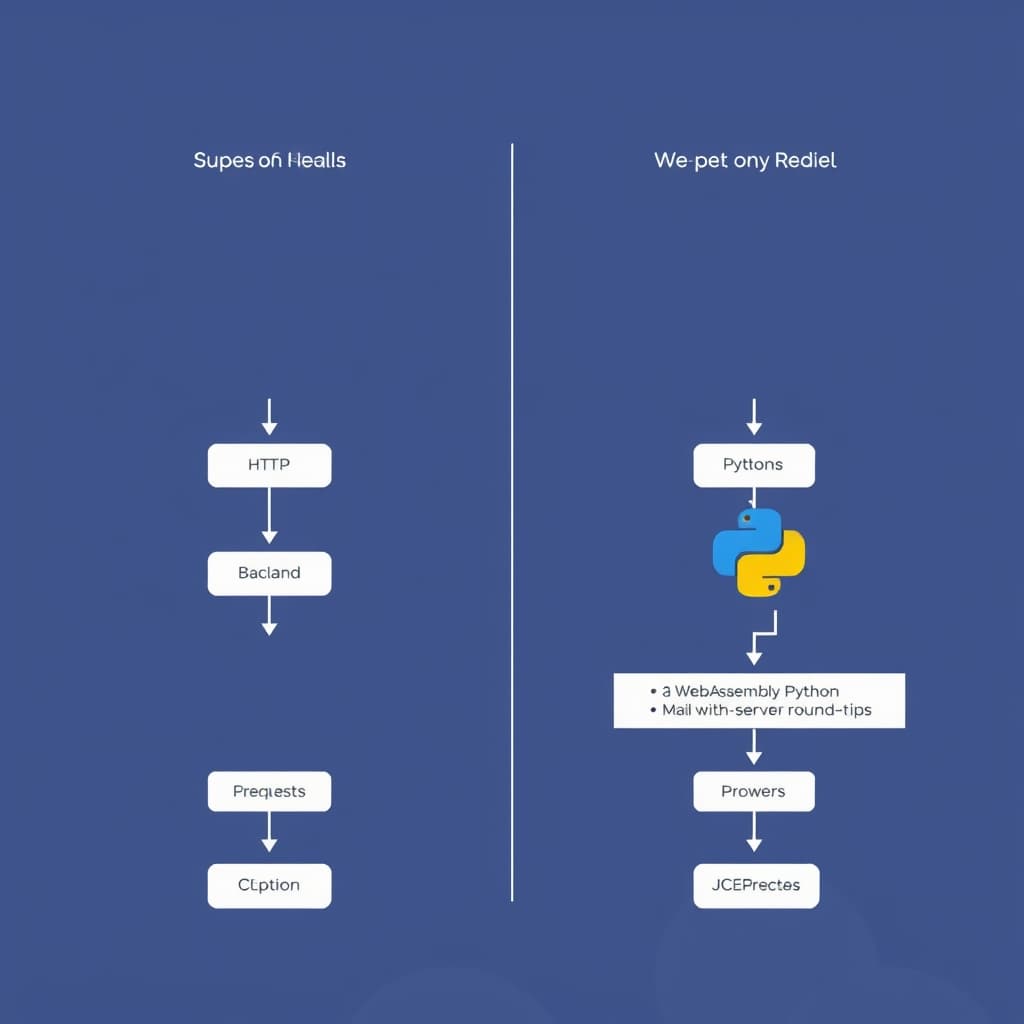

Enterprise architects face a costly dilemma: keep scaling expensive, high-latency backend infrastructure to serve Python-based data applications, or move heavy compute payloads onto less predictable client hardware at the edge. For over a decade, Python has dominated data science, machine learning, and scientific computing, yet it remained locked out of the browser. That barrier has collapsed. The formalization of WebAssembly-Native Python Execution, anchored by standards like PEP 816 for WASI support and PEP 776 for Emscripten, forces a re-evaluation of how we deploy computational workloads. I will dissect the mechanics of running CPython in WebAssembly (Wasm), evaluate the economic trade-offs of shifting from server-side to client-side compute, and define the strategic roadmap for frontend Python through 2030.

Deconstructing PEP 816: The Mechanics of Python on Wasm

Compiler Toolchains and Emscripten Optimizations

Bringing CPython to the browser requires translating its C-based architecture into a binary instruction format understood by modern web engines. This is achieved through Emscripten, an LLVM-based compiler toolchain that targets Wasm. PEP 776 formalizes this pipeline, making Emscripten a Tier 3 supported platform. WebAssembly alone only defines the instruction set; it has no built-in awareness of the host operating system. PEP 816 addresses that gap for non-Emscripten hosts by defining CPython’s support for the WebAssembly System Interface (WASI). By fixing the supported WASI and WASI SDK versions at the beta phase of each Python release, the core development team gives enterprise engineers a stable, predictable target to build against. This reduces the risk of the silent breakage that affected early browser-based Python experiments.
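As a small illustration of how application code can tell these build targets apart, the sketch below checks `sys.platform`, which CPython reports as `"emscripten"` on Emscripten builds and `"wasi"` on WASI builds. The function name `detect_runtime` and the returned labels are hypothetical, chosen only for this example.

```python
import sys


def detect_runtime(platform: str = sys.platform) -> str:
    """Classify the interpreter's build target from its platform string.

    The returned labels are illustrative; only the compared values
    ("emscripten" and "wasi") come from CPython itself.
    """
    if platform == "emscripten":
        return "emscripten"  # browser or Node.js host via Emscripten
    if platform == "wasi":
        return "wasi"  # standalone Wasm runtime via WASI
    return "native"  # any conventional operating system build


# Branch on the result to skip features a Wasm build may not provide,
# such as subprocesses.
runtime = detect_runtime()
```

A check like this lets one codebase run both natively and under Wasm while disabling code paths the sandbox does not support.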

Memory Management Across the JavaScript Boundary

Executing Python inside a browser necessitates bridging the Wasm linear memory space with the JavaScript engine's garbage-collected heap. Frameworks like Pyodide handle this via complex proxy objects, allowing JavaScript to call Python functions and vice versa. This boundary crossing introduces friction. Passing large datasets—such as a multi-gigabyte pandas DataFrame—by value across the JS-Wasm boundary creates severe performance degradation due to memory duplication. High-performance implementations mitigate this by sharing direct pointers to memory buffers, treating the Wasm memory space as a zero-copy ArrayBuffer in JavaScript. Engineers must architect their data flows to minimize boundary crossings, executing the bulk of the computational heavy lifting entirely within the Wasm sandbox before yielding a final, lightweight result back to the Document Object Model (DOM).
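The difference between passing data by value and sharing a buffer can be sketched in plain Python. This is an analogy rather than Pyodide's actual API: a `bytearray` stands in for Wasm linear memory, `bytes(...)` plays the role of a by-value copy, and `memoryview` plays the role of a zero-copy view. The helper names `copy_out` and `view_out` are hypothetical.

```python
# Stand-in for Wasm linear memory: one contiguous, mutable byte buffer.
linear_memory = bytearray(16)


def copy_out(memory: bytearray, offset: int, length: int) -> bytes:
    """By-value transfer: allocates a second buffer and duplicates the bytes."""
    return bytes(memory[offset:offset + length])


def view_out(memory: bytearray, offset: int, length: int) -> memoryview:
    """Zero-copy transfer: a window onto the same underlying bytes."""
    return memoryview(memory)[offset:offset + length]


snapshot = copy_out(linear_memory, 0, 4)  # independent duplicate
window = view_out(linear_memory, 0, 4)    # shares storage with linear_memory

# A later write inside the "sandbox" is visible through the view,
# but not through the copy taken earlier.
linear_memory[0] = 255
```

The copy costs memory proportional to the data size on every crossing, while the view costs a small constant amount, which is why keeping large arrays inside the sandbox and exposing views matters for big datasets.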

Serverless Redefined: The Economic Impact of Client-Side Compute

Slashing Cloud Infrastructure and Egress Costs

Traditional Python applications rely on a monolithic or microservice backend to process data, returning serialized results to the frontend. This model incurs continuous compute costs and massive cloud egress fees, particularly when visualizing large datasets. WebAssembly-Native Python Execution flips this economic model. By downloading the Python runtime directly to the client, the end-user's hardware absorbs the computational burden. A company serving ten thousand concurrent users running complex financial models no longer needs to provision hundreds of Kubernetes pods. The server's role is reduced to delivering static assets—the compiled Wasm binaries and raw data files—effectively transforming expensive compute workloads into cheap content delivery network (CDN) traffic.
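The trade-off can be framed as simple arithmetic. The sketch below is a deliberately crude cost model, and every parameter (users per pod, pod price, egress and CDN prices) is a hypothetical input that the reader must supply from their own bills; none of the values come from the article or from any provider's price list.

```python
def monthly_cost_server_side(peak_users: int, users_per_pod: int,
                             pod_cost: float, egress_gb: float,
                             egress_cost_per_gb: float) -> float:
    """Compute pods sized for peak load plus egress for returned results."""
    pods = -(-peak_users // users_per_pod)  # ceiling division
    return pods * pod_cost + egress_gb * egress_cost_per_gb


def monthly_cost_client_side(cdn_gb: float, cdn_cost_per_gb: float) -> float:
    """Static delivery only: Wasm runtime, wheels, and raw data files."""
    return cdn_gb * cdn_cost_per_gb


# Placeholder inputs for illustration only, not measurements.
server = monthly_cost_server_side(10_000, 100, 50.0, 200.0, 0.25)
client = monthly_cost_client_side(400.0, 0.25)
```

The model ignores factors such as cache hit rates and the size of the runtime download, so it is a starting point for a comparison rather than a forecast.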

Latency Reduction in Data-Heavy Workloads

Network latency is the silent killer of interactive data applications. Every slider adjustment in a traditional dashboard triggers an HTTP request, a server-side computation, and a response payload, which commonly puts a floor of 50-100 milliseconds under each interaction. Running the Python interpreter on the client removes that network hop entirely.

Consider a quantitative trading firm building an internal risk-analysis dashboard. In a legacy architecture, rerunning a Monte Carlo simulation after each parameter change required constant API polling. By deploying the simulation logic via Wasm, the application responds without any network round trip. The Python code executes directly on the trader's machine, providing near-instant visual feedback and fundamentally altering the user experience of web-based scientific software.
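To make the dashboard scenario concrete, here is a minimal sketch of the kind of simulation that could run locally on each slider change. It uses only the standard library, so it does not depend on any particular Wasm distribution. The function name `simulate_var`, its parameters, and the simple-returns model are assumptions made for illustration, not the firm's actual method.

```python
import random


def simulate_var(start_value: float, daily_mu: float, daily_sigma: float,
                 days: int, n_paths: int, confidence: float = 0.95,
                 seed=None) -> float:
    """Estimate value-at-risk as a high percentile of simulated losses.

    Each path compounds normally distributed daily returns; the loss is
    the starting value minus the final value of the path.
    """
    rng = random.Random(seed)
    losses = []
    for _ in range(n_paths):
        value = start_value
        for _ in range(days):
            value *= 1.0 + rng.gauss(daily_mu, daily_sigma)
        losses.append(start_value - value)
    losses.sort()
    # Index of the requested percentile, clamped to the last element.
    index = min(int(confidence * n_paths), n_paths - 1)
    return losses[index]


# A slider handler would call this with the new parameter values.
estimate = simulate_var(1_000_000.0, 0.0, 0.01, days=10, n_paths=2_000, seed=42)
```

Because the whole computation stays on the client, the handler returns as soon as the loop finishes, with no request to wait on. In practice a vectorized library would replace the inner loops.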

Enterprise Adoption: Deploying Scientific Computing to the Edge

Jupyter Notebooks Operating Entirely in the Browser

The educational and enterprise data science sectors are already experiencing the shockwaves of this transition. Projects like JupyterLite demonstrate the viability of running a complete interactive computing environment without a single backend kernel. Entire university courses and corporate training platforms now use browser-based Python via platforms like Capytale and PyodideU. By eliminating the need to provision isolated Docker containers for every student or analyst, organizations drastically reduce infrastructure complexity. The environment boots without any server-side provisioning, loads dependencies via pre-compiled Wasm wheels, and executes code safely within the user's local context.

Real-Time Machine Learning Inference at the Client Level

Deploying machine learning models traditionally requires robust API endpoints, raising both scalability and privacy concerns. Executing inference directly on the client addresses both. A healthcare application can now load a scikit-learn diagnostic model into the browser, process sensitive patient telemetry data locally, and output predictions without ever transmitting protected health information (PHI) over the network. This architecture makes it far easier to satisfy stringent data residency and privacy regulations, because the raw data never leaves the user's device.
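The pattern can be sketched without any ML library: the model's parameters arrive as a static asset, and scoring happens locally. The example below swaps the scikit-learn model mentioned above for a hand-written logistic scoring function so that it stays self-contained. The JSON layout, feature names, and weights are hypothetical placeholders and do not represent a real diagnostic model.

```python
import json
import math

# Hypothetical model artifact, as it might be served alongside static assets.
MODEL_JSON = '{"intercept": -0.5, "weights": {"feature_a": 0.8, "feature_b": -0.3}}'


def load_model(payload: str) -> dict:
    """Parse model parameters that were downloaded as a static file."""
    return json.loads(payload)


def predict_probability(model: dict, features: dict) -> float:
    """Logistic score computed locally; the features never leave the device."""
    z = model["intercept"] + sum(
        weight * features[name] for name, weight in model["weights"].items()
    )
    return 1.0 / (1.0 + math.exp(-z))


model = load_model(MODEL_JSON)
probability = predict_probability(model, {"feature_a": 1.2, "feature_b": 0.4})
```

Only the model file crosses the network, and it travels from server to client. The sensitive inputs are read and consumed inside the browser session.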

Security and Sandboxing in the Browser Environment

WASI (WebAssembly System Interface) Capability Models

WebAssembly was engineered with a default-deny security posture. Unlike native CPython, which assumes broad access to the underlying operating system, Python running in Wasm cannot arbitrarily read files, open network sockets, or spawn subprocesses. PEP 816's integration of WASI introduces a capability-based security model. System resources must be explicitly provisioned and injected into the Wasm runtime by the host environment. If an application requires access to a specific virtual directory, the host browser or JavaScript wrapper must explicitly grant a file descriptor for that exact path.
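The capability idea can be illustrated with a toy model in plain Python. This is not the WASI API: the class `SandboxFS` and its methods are hypothetical and only mimic the rule that a path is reachable solely when the host has granted its directory up front.

```python
from pathlib import PurePosixPath


class SandboxFS:
    """Toy default-deny filesystem: only host-granted directories are visible."""

    def __init__(self, granted_dirs):
        # The host decides the grants before guest code starts running.
        self._granted = [PurePosixPath(d) for d in granted_dirs]

    def is_allowed(self, path: str) -> bool:
        target = PurePosixPath(path)
        # Reject traversal components instead of trying to resolve them.
        if ".." in target.parts:
            return False
        return any(
            target == root or root in target.parents for root in self._granted
        )

    def open(self, path: str) -> str:
        if not self.is_allowed(path):
            raise PermissionError(f"no capability granted for {path}")
        return f"handle:{path}"  # placeholder for a real file descriptor


fs = SandboxFS(["/data"])
handle = fs.open("/data/input.csv")  # permitted: inside a granted directory
```

Anything outside `/data` raises `PermissionError`, which mirrors the default-deny posture: the guest cannot name a resource it was never handed.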

Mitigating Arbitrary Code Execution Risks

Allowing users to execute custom Python scripts historically required complex, heavily monitored sandboxing solutions to prevent Remote Code Execution (RCE) attacks. WebAssembly-Native Python Execution neuters this threat vector. Because the code executes client-side within the browser's heavily fortified Wasm sandbox, a malicious script can only consume the user's own local CPU cycles or memory. It cannot pivot to internal corporate networks, access server-side environment variables, or compromise backend databases. This paradigm shift allows developers to safely expose raw Python REPLs and scripting interfaces to end-users without jeopardizing infrastructure security.

Strategic Roadmap: The 2026-2030 Evolution of Frontend Python

Ecosystem Maturation for pip Packages

The pyemscripten platform tag, defined via PEP 783, establishes a standardized mechanism for distributing Wasm-compiled binary wheels via PyPI. Over the next three years, the build pipelines for major open-source projects will natively output Emscripten targets, eliminating the current reliance on specialized distributions like Pyodide's custom package registry.

Convergence with WebGPU for Accelerated Processing

While CPU-bound Wasm execution is highly optimized, modern AI and data workloads demand hardware acceleration. The critical frontier for 2026 and beyond is the integration of WebAssembly with WebGPU. Currently, browser-based Python lacks direct access to the client's discrete graphics card. As the WebGPU standard matures and Wasm bindings are finalized, Python applications will be able to dispatch parallelized matrix multiplications and neural network tensor operations directly to the local GPU. This convergence will unlock full-scale deep learning training and inference directly in the browser, rivaling native desktop performance.

If browser vendors arbitrarily restrict WebAssembly memory allocations or if the Python Packaging Authority (PyPA) fails to standardize cross-compiled wheel distribution across mainstream CI/CD pipelines, I would revise my stance. A fragmented packaging ecosystem would trap Wasm Python as an educational novelty rather than an enterprise-grade deployment target.

pyemscripten wheel ecosystem and the impending WebGPU integrations over the next two release cycles. The era of Python existing exclusively behind a REST API is over; the future of computational software is at the edge.

FAQ

How does WebAssembly-native Python handle third-party C-extension libraries like NumPy? Currently, C-extensions must be specifically compiled for the Wasm target using tools like Emscripten. The broader Python ecosystem has made significant strides in pre-compiling popular scientific libraries, though proprietary or niche C-extensions still require manual porting.

What is the performance overhead of running Python in the browser compared to native desktop execution? While CPU-bound tasks in Wasm run at near-native speeds, the Python interpreter itself introduces overhead. Execution is typically slower than native CPython, but for interactive web applications, this difference is often imperceptible to the end user and offset by zero network latency.
