From Syntax to Systems: The Rise of AI Agent Oversight in Software Engineering

In January 2026, Infosys deployed Cognition’s autonomous AI software engineer, Devin, across its global financial services practice to execute complex legacy migrations. This enterprise-scale integration, operating under the compliance obligations of the newly enforceable EU AI Act and guided by the voluntary NIST AI Risk Management Framework (AI RMF), signals a lasting industry pivot. Routine code generation is largely automated in 2026, which shifts the primary bottleneck from writing syntax to orchestrating the machines that write it. The analysis below details the transition from traditional coding to AI supervision, focusing on system architecture, security governance, and the new skills required to manage autonomous development pipelines.

Table 1: Traditional SDLC vs. 2026 AI-Augmented SDLC

Deconstructing the Shift from Syntax to Architecture

The Commoditization of Raw Code Generation

The marginal cost of generating standard boilerplate, API integrations, and algorithmic logic has approached zero. Autonomous agents no longer merely autocomplete lines; they provision local environments, execute test suites, read error logs, and iterate on their own output without human intervention. This capability removes the manual labor of typing syntax and forces engineering teams to adapt to a reality where output volume is effectively unbounded but architectural coherence is not. The immediate second-order effect is the devaluation of memorized language syntax. Developers who built careers on deep knowledge of a particular language's syntax are finding those skills commoditized by models trained on vast repositories of existing code.

Why Systems Thinking is the New Core Competency

With the mechanics of coding delegated to machines, the human engineer's role shifts toward system design. Systems thinking requires understanding how disparate microservices interact, how data flows through a distributed architecture, and where race conditions or cascading failures might occur. When an AI agent generates a functional but inefficient database query, the engineer supervising it must recognize the downstream impact on server load and memory allocation. The focus shifts from executing a function to understanding how that function affects the broader system, and to establishing the guardrails required before the agent executes it.

Establishing Frameworks for Autonomous Agent Governance

Defining Execution Boundaries for Autonomous Commits

Autonomous agents present a unique operational hazard: they can execute actions that break production environments at machine speed. Governance frameworks now demand strict execution boundaries. Organizations implement zero-trust service meshes where agents operate in sandboxed environments, stripped of direct write access to production databases. Commits generated by AI are routed through deterministic policy engines that verify code against predefined constraints before a human ever reviews it. If an agent attempts to modify a core authentication module, the system automatically flags the commit, requiring cryptographic sign-off from a senior architect.
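
The flagging rule described above can be sketched as a small deterministic check. This is a minimal illustration, not any particular policy engine: the `Commit` type, the `PROTECTED_GLOBS` patterns, the `agent:` author prefix, and the `senior-architect` role name are all assumptions made for the example, and verifying the cryptographic signature itself is assumed to happen upstream.

```python
from dataclasses import dataclass, field
from fnmatch import fnmatchcase

# Hypothetical protected paths; a real policy would live in reviewed configuration.
PROTECTED_GLOBS = ("auth/*", "migrations/prod/*")


@dataclass
class Commit:
    author: str                      # assumed convention: "agent:<name>" or "human:<name>"
    changed_paths: list[str]
    # Roles whose sign-off signatures were already verified upstream (assumption).
    signoffs: set[str] = field(default_factory=set)


def evaluate(commit: Commit) -> str:
    """Return 'needs_signoff' when an agent touches a protected path, else 'allow'."""
    is_agent = commit.author.startswith("agent:")
    touches_protected = any(
        fnmatchcase(path, glob)
        for path in commit.changed_paths
        for glob in PROTECTED_GLOBS
    )
    if is_agent and touches_protected and "senior-architect" not in commit.signoffs:
        return "needs_signoff"
    return "allow"


if __name__ == "__main__":
    print(evaluate(Commit("agent:devin", ["auth/login.py"])))
    print(evaluate(Commit("agent:devin", ["docs/readme.md"])))
```

Because the rule is a pure function of the commit metadata, the same commit always receives the same verdict, which is what makes the gate auditable before any human review.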

Security Protocols in Machine-Generated Repositories

Machine-generated repositories introduce novel attack vectors, most notably package hallucination and covert code injection. An agent attempting to solve a complex dependency issue might invent a library that does not exist, creating an opening for attackers to register that phantom package on public registries. To mitigate this, security teams apply the NIST AI RMF, specifically its 'Map' and 'Measure' functions, to identify where agents introduce risk and to audit their behavior. Continuous monitoring tools scan machine-generated output for logic drift and unauthorized API calls, checking that the agent adheres to the principle of least privilege.
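
One way to blunt package hallucination is to reject any dependency that is not on a vetted allowlist before installation is attempted. The sketch below assumes a pip-style requirements file and a hypothetical `APPROVED_PACKAGES` set; the function name is illustrative, and a real pipeline would more likely query an internal registry mirror.

```python
import re

# Hypothetical allowlist of vetted packages (for example, those mirrored internally).
APPROVED_PACKAGES = {"requests", "numpy", "sqlalchemy"}

# Leading package name of a requirement line, before extras or version specifiers.
_NAME = re.compile(r"^([A-Za-z0-9][A-Za-z0-9._-]*)")


def unapproved_dependencies(requirements_text: str) -> list[str]:
    """Return requirement names that are not on the allowlist."""
    unknown = []
    for raw in requirements_text.splitlines():
        line = raw.split("#", 1)[0].strip()   # drop comments and whitespace
        if not line or line.startswith("-"):  # skip blanks and pip options
            continue
        match = _NAME.match(line)
        if match is None:
            unknown.append(line)              # unparseable lines are flagged, not trusted
            continue
        # Normalize the name the way Python package indexes do (PEP 503).
        name = re.sub(r"[-_.]+", "-", match.group(1)).lower()
        if name not in APPROVED_PACKAGES:
            unknown.append(name)
    return unknown


if __name__ == "__main__":
    agent_output = "requests>=2.31\nnumpy==1.26.4\nfast-json-utils2==0.1\n"
    print(unapproved_dependencies(agent_output))
```

The check fails closed: a name the allowlist does not recognize blocks the build, so a phantom package registered by an attacker is never fetched.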

Navigating the New Developer Workflow

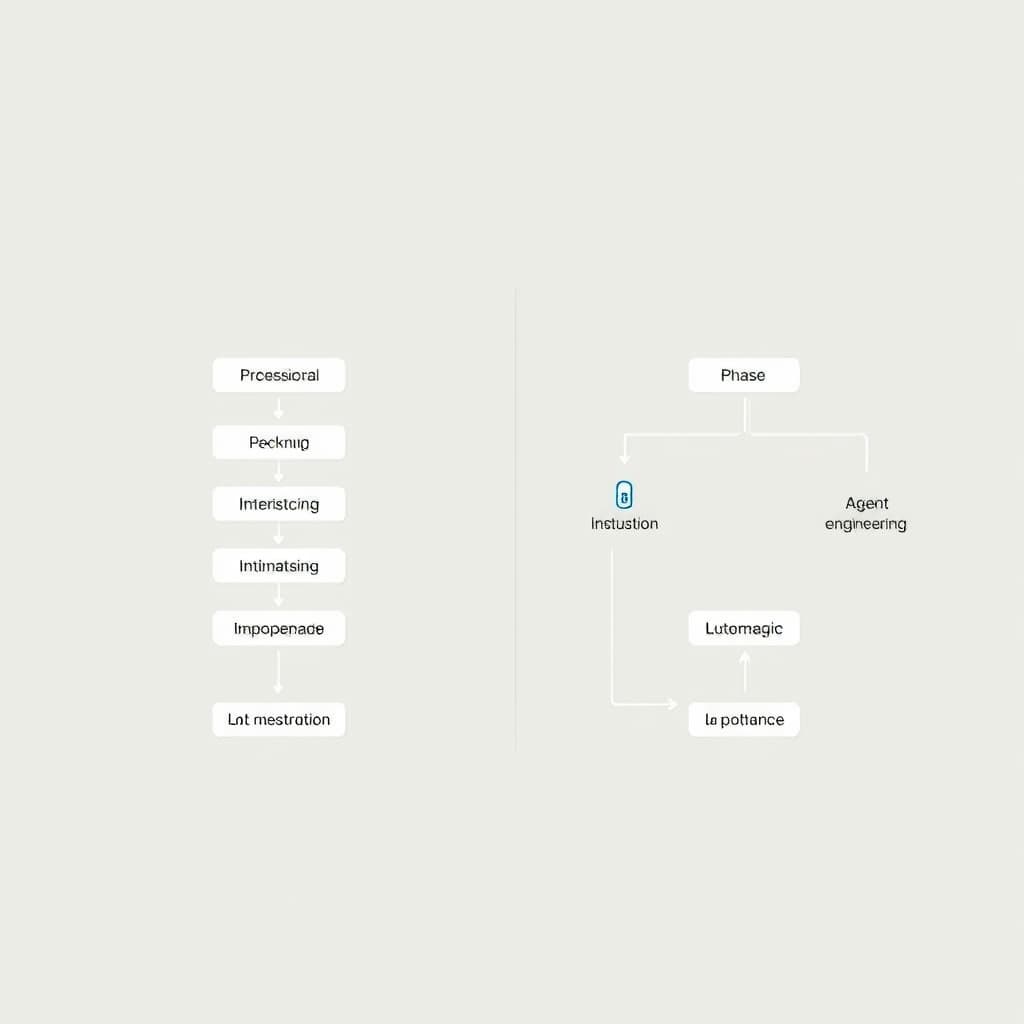

Translating Business Logic into Agent Constraints

The developer workflow has shifted from writing code to writing constraints. This process requires engineers to translate ambiguous business requirements into rigid, machine-readable parameters. Instead of writing a sorting algorithm, the engineer defines the acceptable time complexity, the memory limits, and the exact edge cases the agent must handle. The precision of these instructions largely determines the quality of the autonomous output. Vague constraints lead to sprawling, inefficient codebases, while precise parameters guide the agent toward efficient, secure solutions.
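
The sorting example above might be captured as a machine-readable specification like the following. This is a sketch under stated assumptions: `SortTaskConstraints`, its field names, and `failed_edge_cases` are hypothetical, and the complexity and memory fields are recorded as stated requirements rather than measured here.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class SortTaskConstraints:
    max_time_complexity: str   # stated requirement, e.g. "O(n log n)"; not measured here
    max_memory_mb: int         # stated requirement; enforcement is left to the sandbox
    # Each edge case pairs an input with the exact output the agent's code must return.
    edge_cases: tuple[tuple[list[int], list[int]], ...]


SPEC = SortTaskConstraints(
    max_time_complexity="O(n log n)",
    max_memory_mb=64,
    edge_cases=(
        ([], []),                      # empty input
        ([1], [1]),                    # single element
        ([3, 1, 2], [1, 2, 3]),        # unsorted input
        ([2, 2, 1], [1, 2, 2]),        # duplicates
        ([-1, 0, -5], [-5, -1, 0]),    # negative values
    ),
)


def failed_edge_cases(
    candidate: Callable[[list[int]], list[int]], spec: SortTaskConstraints
) -> list[list[int]]:
    """Return the inputs for which the candidate's output differs from the spec."""
    failures = []
    for given, expected in spec.edge_cases:
        if candidate(list(given)) != expected:   # copy so the spec is never mutated
            failures.append(given)
    return failures


if __name__ == "__main__":
    print(failed_edge_cases(sorted, SPEC))
```

Writing the edge cases as data rather than prose leaves the agent no room to interpret them, and lets the same specification double as an acceptance check on whatever the agent produces.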

Quality Assurance in the Age of Infinite Output

When an autonomous system can generate tens of thousands of lines of code in minutes, traditional line-by-line code review becomes impractical. Quality assurance now relies heavily on automated formal verification and behavior-driven testing. Engineers design comprehensive test suites that validate the final state of the application rather than inspecting the specific syntax used to achieve it. This shift requires robust continuous integration pipelines capable of running thousands of simulated user interactions against the AI-generated codebase and flagging any deviation from expected behavior before the code reaches a staging environment.
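
Validating final state rather than syntax can be illustrated with a small randomized check. In this sketch the `transfer` function is a stand-in for agent-generated code, and the two invariants (funds are conserved, no balance goes negative) are assumed business rules chosen for the example; none of these names come from a real system.

```python
import random
from typing import Callable

Balances = dict[str, int]


def transfer(balances: Balances, src: str, dst: str, amount: int) -> Balances:
    """Stand-in for agent-generated code under test (hypothetical)."""
    if amount <= 0 or balances.get(src, 0) < amount:
        return dict(balances)          # reject invalid transfers, state unchanged
    updated = dict(balances)
    updated[src] -= amount
    updated[dst] = updated.get(dst, 0) + amount
    return updated


def check_final_state(
    impl: Callable[[Balances, str, str, int], Balances],
    runs: int = 1000,
    seed: int = 0,
) -> list[str]:
    """Simulate many interactions and report violations of the expected end state."""
    rng = random.Random(seed)          # seeded so CI failures are reproducible
    violations = []
    for _ in range(runs):
        balances = {"a": rng.randint(0, 100), "b": rng.randint(0, 100)}
        amount = rng.randint(-10, 150)
        after = impl(balances, "a", "b", amount)
        if sum(after.values()) != sum(balances.values()):
            violations.append(f"funds not conserved: {balances}, amount={amount}")
        if min(after.values()) < 0:
            violations.append(f"negative balance: {balances}, amount={amount}")
    return violations


if __name__ == "__main__":
    print(len(check_final_state(transfer)), "violations")
```

The check never reads the implementation's source, so it applies unchanged whether the agent rewrites `transfer` in ten lines or a thousand.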

Table 2: Traditional Code Review vs. Agent Oversight
