The Edge Computing Pivot: How Meta’s Manus Agent Redefines Desktop AI

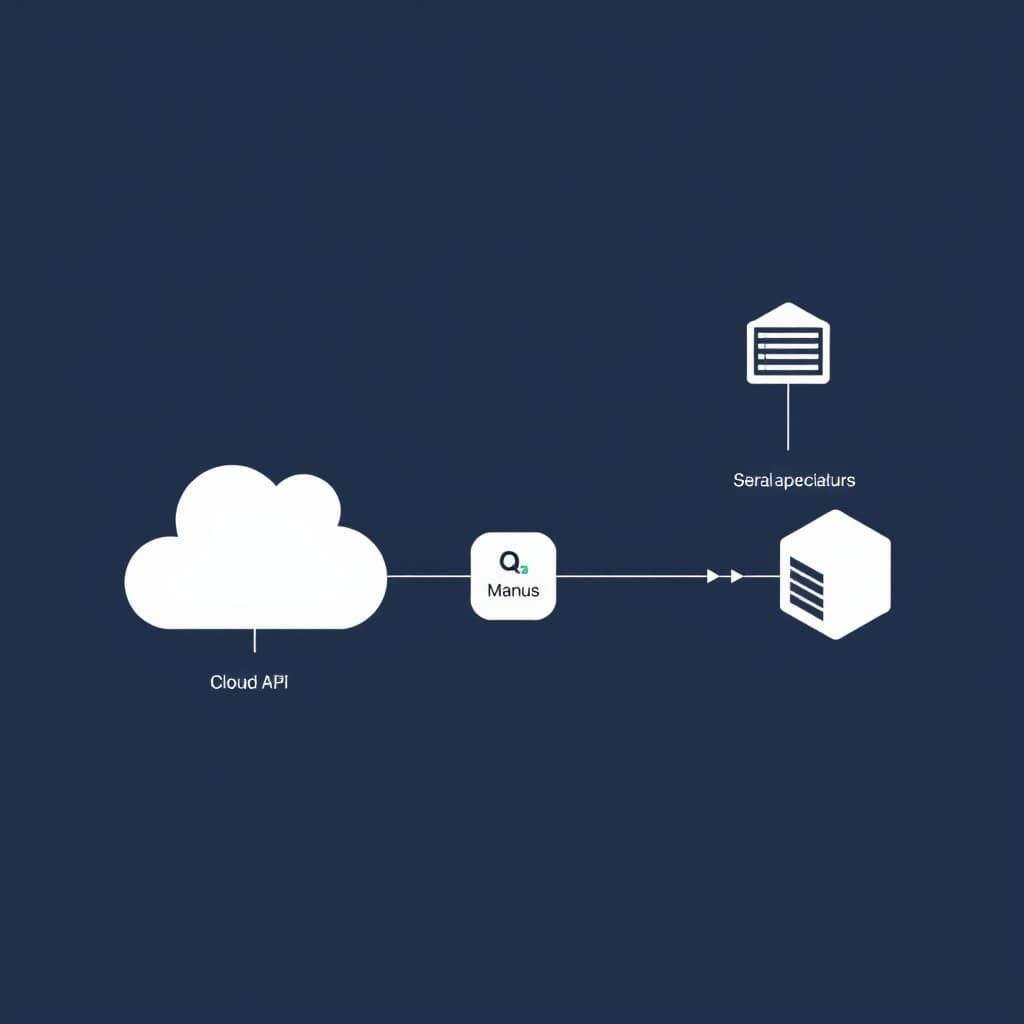

Network latency for cloud-based AI automation currently averages 800 to 1,500 milliseconds per API call. When an agent orchestrates a multi-step workflow across local desktop applications, that delay compounds with every step, making real-time system orchestration impractical. This constraint is driving a structural pivot in endpoint computing. Meta’s deployment of the Manus "My Computer" agent shifts execution from remote server farms to the local macOS or Windows environment, redefining hardware utility for Desktop AI Agents.
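A back-of-the-envelope sketch shows how quickly the delay adds up. The 800 to 1,500 ms range comes from the figures above; the 20-step workflow and the assumption that every step blocks on one sequential API call are illustrative only.

```python
def cumulative_latency_s(steps: int, per_call_ms: float) -> float:
    """Total network wait in seconds when each step blocks on one API call."""
    return steps * per_call_ms / 1000.0


# Hypothetical workflow length; the step count is an assumption, not a measurement.
STEPS = 20
low = cumulative_latency_s(STEPS, 800)
high = cumulative_latency_s(STEPS, 1500)
print(f"{STEPS} sequential calls: {low:.0f} to {high:.0f} seconds of network wait")
```

Under these assumptions the agent spends 16 to 30 seconds waiting on the network before any local work is counted.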

By analyzing the architectural mechanics of localized AI execution, the security trade-offs of system-level agent access, and the competitive dynamics shaping operating systems, engineering and compliance teams can accurately assess the viability of on-device autonomous operators.

The Architecture of Localized Execution: Moving Beyond Cloud Dependency

Decoupling from remote servers for latency reduction

Traditional LLM wrappers operate via a request-response cycle. A user issues a prompt, the payload travels to a cloud endpoint, is processed there, and comes back as text output. Applied to system automation, this model breaks down, because an agent needs continuous state awareness: reading file directories, monitoring UI changes, and executing sequential commands. Manus avoids the network hop for these actions by embedding the execution environment directly on the host machine. The agent translates high-level intents into local system calls, so the latency of each action is bounded by local hardware rather than by a network round trip.

Hardware utilization: Leveraging Apple Silicon and local GPUs

The feasibility of this localized architecture depends on recent advances in endpoint hardware. Modern machines equipped with Apple Silicon (M3/M4 series) or dedicated local GPUs provide the neural processing units (NPUs) or GPU compute needed to run quantized inference models efficiently. Manus uses these otherwise idle compute resources to run background inference without crippling the host's primary functions. Rather than being billed as cloud GPU cycles, the execution cost is offloaded to hardware the user already owns.

Meta's 'My Computer' Integration: System-Level Access on macOS and Windows

Case Study: The Autonomous Accountant Workflow

During the deployment of the My Computer feature, Meta highlighted an accounting workflow where hundreds of unsorted PDF invoices required standardized renaming based on their internal contents. A cloud-based agent would require uploading sensitive financial documents to a third-party server, a direct violation of most corporate data policies. The localized Manus agent reads the PDFs via local terminal access, parses the vendor names and dates, and executes a batch rename script entirely on-device in under three minutes. The data never leaves the host machine.
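The renaming step of such a workflow might look roughly like the sketch below. It is not Manus's actual script: the naming scheme, the function names, and the shape of the `extracted` mapping are all hypothetical, and the PDF parsing that would produce the vendor and date is assumed to happen elsewhere.

```python
import re
from datetime import date
from pathlib import Path


def standardized_name(vendor: str, invoice_date: date) -> str:
    """Build a name such as '2025-03-14_acme-supplies.pdf' (hypothetical scheme)."""
    slug = re.sub(r"[^a-z0-9]+", "-", vendor.lower()).strip("-")
    return f"{invoice_date.isoformat()}_{slug}.pdf"


def plan_renames(extracted: dict[Path, tuple[str, date]]) -> dict[Path, Path]:
    """Map each source PDF to a collision-free target in the same directory.

    `extracted` holds the (vendor, date) pair already parsed from each file.
    """
    plan: dict[Path, Path] = {}
    taken: set[Path] = set()
    for src, (vendor, invoice_date) in sorted(extracted.items()):
        base = standardized_name(vendor, invoice_date)
        target = src.with_name(base)
        suffix = 2
        # Add -2, -3, ... when two invoices share a vendor and date.
        while target in taken or (target.exists() and target != src):
            target = src.with_name(f"{base[:-4]}-{suffix}.pdf")
            suffix += 1
        taken.add(target)
        plan[src] = target
    return plan


def apply_renames(plan: dict[Path, Path]) -> None:
    """Execute the plan on the local file system; nothing leaves the machine."""
    for src, target in plan.items():
        if src != target:
            src.rename(target)
```

Separating the plan from its execution lets a user or an approval prompt review every rename before any file is touched.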

File system permissions and terminal command execution

The core capability of Manus's desktop application is its ability to execute command-line interface (CLI) instructions directly within the host's terminal. This is a sharp departure from sandboxed web applications. If a user instructs the agent to "organize my flower shop photos," Manus scans the local directory, identifies image content via local vision models, creates categorized subfolders, and executes the necessary file movement commands to sort the assets.

App-to-app orchestration without API bottlenecks

Because Manus operates at the operating system level, it does not rely on fragile, rate-limited third-party APIs to connect applications. It can interact with local software exactly as a human would—manipulating files in Finder or Explorer, passing data into a local Excel instance, and triggering a local email client. This bypasses the traditional integration bottlenecks that have historically stalled enterprise automation initiatives.

The Privacy Paradox: Data Security in On-Device Autonomous Agents

Mitigating data exfiltration risks in local environments

Providing an AI agent with terminal access creates a significant attack surface. A compromised prompt or a malicious file could theoretically instruct the agent to execute a script that exfiltrates local directories. To mitigate this, the architecture requires strict permission boundaries. Manus implements a granular approval matrix where the agent must request explicit "Allow Once" or "Always Allow" permissions before executing destructive commands or accessing external networks. Enforcing these guardrails without introducing severe user friction remains an active engineering challenge.
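One way to picture such an approval matrix is the minimal sketch below. It is an illustration, not Manus's implementation: the `ApprovalGate` class, the `Deny` option, and the category names are assumptions; only the "Allow Once" and "Always Allow" labels come from the description above.

```python
from enum import Enum


class Decision(Enum):
    ALLOW_ONCE = "Allow Once"
    ALWAYS_ALLOW = "Always Allow"
    DENY = "Deny"  # assumed third option, not named in the source


class ApprovalGate:
    """Asks before each sensitive action and remembers 'Always Allow' grants."""

    def __init__(self, ask):
        # ask: callback (category, command) -> Decision, e.g. a UI prompt.
        self._ask = ask
        self._always_allowed = set()

    def permit(self, category: str, command: str) -> bool:
        """Return True if the command may run under the given category."""
        if category in self._always_allowed:
            return True
        decision = self._ask(category, command)
        if decision is Decision.ALWAYS_ALLOW:
            self._always_allowed.add(category)
        return decision is not Decision.DENY
```

A caller would wrap every destructive or network-bound command, for example `gate.permit("destructive", "rm -r ./tmp")`, and run it only when the result is `True`. Scoping "Always Allow" to a category rather than to a single command reduces prompts but widens what one click authorizes, which is the friction trade-off described above.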

Navigating enterprise compliance and regulatory scrutiny

The deployment of autonomous agents intersects sharply with data localization laws and corporate compliance frameworks. While local execution solves the problem of transmitting sensitive data to cloud LLMs, it introduces new auditability requirements. IT departments will likely need to log every terminal command the agent executes to support SOC 2 audits and demonstrate GDPR compliance. Additionally, the acquisition of Manus by Meta is currently under regulatory review by Chinese authorities, highlighting the geopolitical complexities of AI technologies that possess deep system-level access.
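Command-level logging of this kind could be sketched as a thin wrapper around process execution. The `run_audited` name, the record fields, and the JSON Lines file format are assumptions chosen for illustration; neither SOC 2 nor GDPR prescribes this format, and a production audit trail would also need tamper-resistant storage.

```python
import json
import subprocess
from datetime import datetime, timezone
from pathlib import Path


def run_audited(argv: list[str], log_path: Path) -> int:
    """Run a command and append one JSON line describing it to log_path."""
    started = datetime.now(timezone.utc).isoformat()
    result = subprocess.run(argv, capture_output=True, text=True)
    record = {
        "timestamp_utc": started,
        "argv": argv,
        "exit_code": result.returncode,
    }
    # Append-only writes keep earlier records intact between runs.
    with log_path.open("a", encoding="utf-8") as log:
        log.write(json.dumps(record) + "\n")
    return result.returncode
```

Passing the command as an argument list rather than a shell string avoids shell interpolation, so the logged `argv` is exactly what was executed.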

The OpenClaw Rivalry: Open-Source vs. Walled Garden Ecosystems

Comparing the MIT-licensed OpenClaw to Meta's proprietary model

The market for Desktop AI Agents has immediately bifurcated into two distinct ideological camps. On one side is Meta's Manus, a proprietary, subscription-based ecosystem tightly integrated with Meta's broader AI ambitions. On the other side is OpenClaw, an MIT-licensed, open-source agent developed by Peter Steinberger that has seen massive adoption among developers.

Developer adoption rates and community-driven extensions

SKILL.md framework. NVIDIA recently launched NemoClaw, an infrastructure layer that adds enterprise-grade privacy and security controls to the OpenClaw stack. This community-driven momentum presents a significant threat to Meta's walled-garden approach.

- Hardware Manufacturers (Apple, NVIDIA): Winners. The demand for local compute power to run always-on agents directly drives sales of high-end silicon and NPUs.

- Enterprise IT Teams: Losers (in the short term). They face an immediate nightmare of securing endpoints against autonomous agents that possess terminal access, requiring entirely new auditing frameworks.

- Open-Source Developers: Winners. Frameworks like OpenClaw allow developers to build customized, self-hosted automation pipelines without paying recurring SaaS fees to tech conglomerates.

Forecasting the Desktop AI Market (2026-2030)

The transition from conversational bots to autonomous operators

The era of the conversational chatbot is ending. By 2028, the baseline expectation for AI will shift from generating text to executing multi-step actions. Desktop AI Agents will function as background daemon processes, continuously monitoring local file systems and application states to anticipate user needs. This transition requires a fundamental rewrite of how operating systems handle inter-process communication and permission sandboxing.

Impact on enterprise IT provisioning and endpoint security

As these agents mature, enterprise IT provisioning will pivot from managing software licenses to managing agent permissions. Mobile Device Management (MDM) platforms will need to evolve into Agent Device Management (ADM) platforms, capable of deploying fleet-wide policies that dictate exactly which directories and applications an autonomous operator is allowed to touch. Security perimeters will shrink from the network edge down to the individual application binary.
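The core check behind a directory policy of this kind can be sketched in a few lines. The `is_path_allowed` function and the idea of a list of allowed root directories are hypothetical; no existing MDM or ADM product's policy format is implied.

```python
from pathlib import Path


def is_path_allowed(candidate: str, allowed_roots: list[str]) -> bool:
    """Return True if candidate resolves to a location inside an allowed root."""
    # resolve() collapses '..' segments and follows symlinks, so a path like
    # 'projects/../secrets' is judged by where it really points.
    resolved = Path(candidate).resolve()
    for root in allowed_roots:
        if resolved.is_relative_to(Path(root).resolve()):
            return True
    return False
```

Comparing resolved path components rather than raw string prefixes matters here: a prefix test would wrongly treat a sibling directory such as `projects_evil` as being inside `projects`. Note that `Path.is_relative_to` requires Python 3.9 or later.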

Meta's aggressive push into on-device automation transforms the personal computer from a passive interface into an active, autonomous operator. The immediate metric to monitor is Manus's enterprise adoption rate relative to that of the open-source alternative, OpenClaw, since that comparison will indicate which approach sets the standard for endpoint AI integration over the next 24 months.

FAQ

How does a local desktop AI agent differ from a traditional cloud-based LLM?

Local agents execute computational tasks directly on the host machine's hardware, granting them system-level access to manipulate files and applications without transmitting sensitive operational data to external servers.

What are the primary endpoint security implications of granting an AI agent terminal access?

Providing an autonomous agent with terminal capabilities introduces significant attack surfaces, requiring strict sandboxing, robust permission protocols, and continuous monitoring to prevent unauthorized system modifications or privilege escalation.
