The $3 Trillion Refactor: How GenAI Agents Are Finally Cracking the COBOL Code

The "Greybeard Crisis"—the demographic cliff where the last generation of COBOL experts retires—has long been framed as a labor shortage. This diagnosis is fundamentally incorrect. The threat to the global financial system, which still processes an estimated $3 trillion daily through mainframes, is not a lack of typists; it is a lack of archaeological understanding. For decades, the primary barrier to modernizing legacy systems was not the difficulty of writing Java or Go, but the impossibility of deciphering undocumented, spaghetti-logic COBOL explicitly designed to save memory in 1980 rather than be readable in 2025.

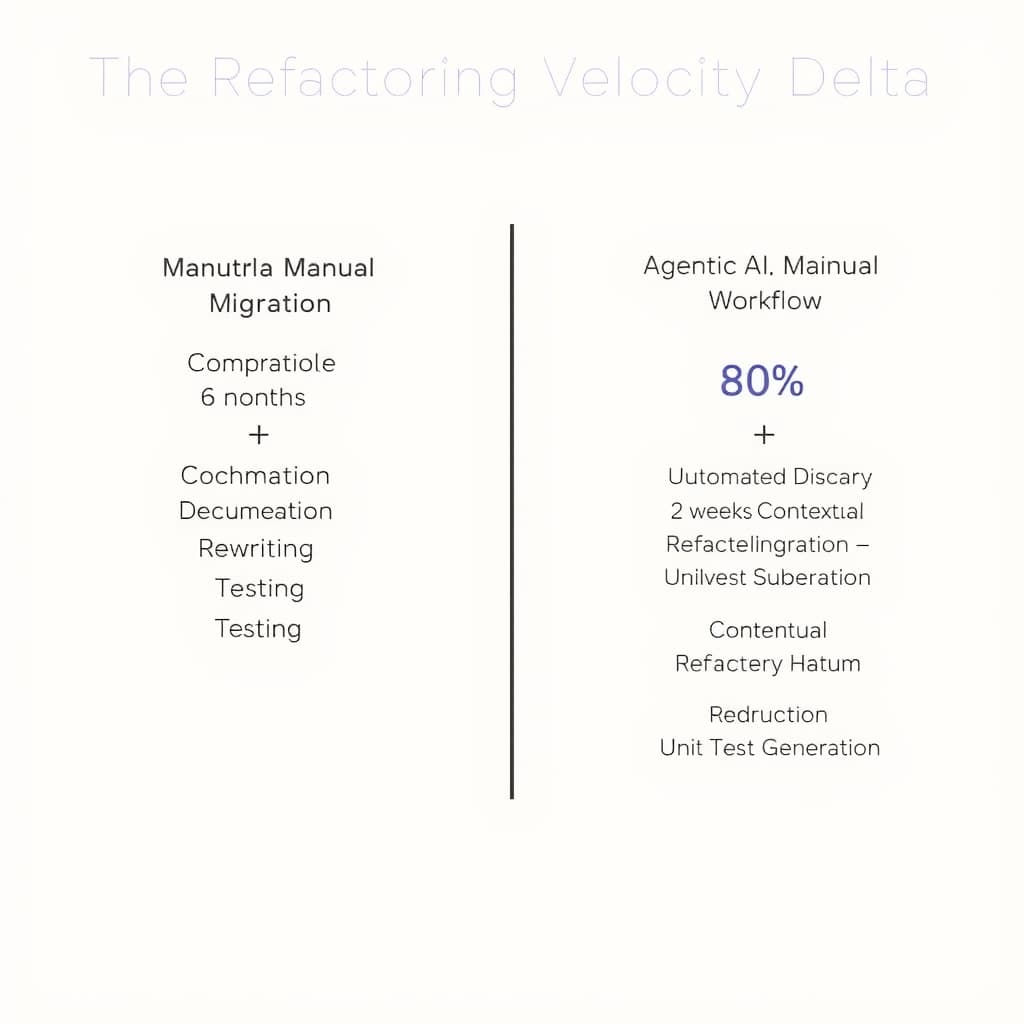

We are currently witnessing a structural break in this dynamic. The introduction of agentic AI workflows—exemplified by tools like Anthropic’s Claude Code and IBM’s watsonx—has shifted the modernization paradigm from manual migration to automated refactoring. By moving beyond simple syntax translation to semantic reasoning, these agents are effectively allowing CIOs to pay down forty years of technical debt in fiscal quarters rather than decades. The bottleneck is no longer human comprehension; it is the speed of verification.

From Regex to Reasoning: How Agentic AI Deciphers Mainframe Logic

Historically, automated migration tools relied on transpilers: glorified regular expression engines that mapped COBOL statements to Java statements one-to-one. The result was "JOBOL": Java code that looked and behaved exactly like COBOL, preserving the technical debt in a new syntax.

Agentic AI fundamentally alters this by utilizing massive context windows (often exceeding 200,000 tokens) to ingest entire program clusters, including Copybooks and JCL (Job Control Language). Instead of translating line-by-line, agents like Claude Code perform three distinct architectural tasks:

- Logic Extraction: The agent identifies the business rule (e.g., "Apply a 2% surcharge if the account balance exceeds $50,000 and the region is APAC") separate from the control flow logic used to manage mainframe memory.

- Pattern Recognition: It recognizes legacy anti-patterns, such as using GO TO statements to jump across paragraphs, and refactors them into modern object-oriented or functional structures.

- Dependency Mapping: It traces variable mutations across thousands of lines of code to ensure that a change in a subroutine does not break a global variable defined in a separate Copybook.
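
To make the Logic Extraction task concrete, here is a minimal Python sketch of the example surcharge rule once it has been separated from mainframe control flow. The function name `surcharge_for`, the constants, the assumption that the 2% is charged on the balance itself, and the half-up rounding to cents are all illustrative choices, not the output of any particular tool.

```python
from decimal import Decimal, ROUND_HALF_UP

# Assumed constants, taken from the example rule in the text.
SURCHARGE_RATE = Decimal("0.02")
BALANCE_THRESHOLD = Decimal("50000")
SURCHARGE_REGION = "APAC"


def surcharge_for(balance: Decimal, region: str) -> Decimal:
    """Return 2% of the balance if it exceeds 50,000 and the region is APAC."""
    if balance > BALANCE_THRESHOLD and region == SURCHARGE_REGION:
        # Assumption: round to whole cents, half-up.
        return (balance * SURCHARGE_RATE).quantize(
            Decimal("0.01"), rounding=ROUND_HALF_UP
        )
    return Decimal("0.00")
```

The point of the sketch is that the rule is a pure function of its inputs: nothing in it refers to working storage, paragraph jumps, or record layouts, which is what makes it reviewable by a business analyst.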

The Mechanics of Isolation

The primary breakthrough is the decoupling of logic from the legacy environment. In a traditional rewrite, a developer spends 70% of their time figuring out what the code does. Agents invert this. By ingesting the codebase, the agent generates a natural language specification of the current system's behavior. The human architect then validates this specification, and the agent generates idiomatic code (e.g., Python or Java Spring Boot) based on the spec, not the original COBOL syntax.
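
The spec-first workflow described above implies a gate: no code generation until a human has signed off on the specification. A minimal Python sketch of that gate follows. The `BehaviorSpec` record, its fields, and both function names are hypothetical, introduced only for illustration.

```python
from dataclasses import dataclass, replace
from typing import List, Optional


@dataclass(frozen=True)
class BehaviorSpec:
    """Hypothetical record: one agent-written description of legacy behavior."""
    rule_id: str
    description: str
    approved_by: Optional[str] = None  # filled in only after human review


def approve(spec: BehaviorSpec, reviewer: str) -> BehaviorSpec:
    """Return a copy of the spec marked as validated by the named reviewer."""
    return replace(spec, approved_by=reviewer)


def specs_ready_for_generation(specs: List[BehaviorSpec]) -> List[BehaviorSpec]:
    """Pass specs through only if every one has been approved by a human."""
    pending = [s.rule_id for s in specs if s.approved_by is None]
    if pending:
        raise ValueError(f"Specs awaiting human review: {pending}")
    return list(specs)
```

In this sketch the generator would only ever see the list returned by `specs_ready_for_generation`, so an unreviewed description of the legacy system cannot reach the code-writing step.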

Big Blue's Moat Under Siege: IBM's Position in the Agentic Era

IBM has successfully defended its mainframe monopoly for decades through the "risk of change." If moving off the mainframe carries a 10% chance of catastrophic failure, CIOs will happily pay rising MIPS (Millions of Instructions Per Second) costs to stay put. Generative AI threatens to lower that migration risk to the point where leaving costs less, risk included, than continuing to pay for maintenance.

However, IBM is not standing still. Their strategy with watsonx Code Assistant for Z is a defensive pivot: convince enterprises to modernize on the platform rather than off it.

The Hybrid Cloud Defense

IBM’s counter-argument is latency and data gravity. Even if an agent can rewrite the code in Java, moving the petabytes of data residing in VSAM files or Db2 for z/OS to the cloud is a massive undertaking. IBM’s pitch is "Modernize in Place": use AI to refactor COBOL into Java, but run that Java on the IBM Z hardware (using zIIP processors to lower costs).

This creates a split in the market. Tier 1 banks with deep pockets may use IBM’s tools to sanitize their code while keeping the hardware. Mid-market financial institutions, however, are increasingly using vendor-agnostic agents to break free entirely, targeting AWS or Azure as the final destination.

The Hallucination Risk in Critical Financial Ledgers

A compiler's verdict is binary: code either compiles or it doesn't. Large Language Models (LLMs) are probabilistic, and the code they generate can compile cleanly while still computing the wrong result. In a social media app, a hallucination is a nuisance; in a ledger processing SWIFT transactions, it is a solvency event.

Deterministic Verification Layers

To mitigate this, successful implementations are not relying on the AI to be perfect. Instead, they are deploying Parallel Run Architectures.

- The "Twin" Model: The legacy COBOL system and the new AI-generated system run simultaneously for a period (often 6-12 months).

- Differential Testing: Every transaction input is fed to both systems. The outputs are compared bit-for-bit.

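- Divergence Analysis: When outputs differ, agents are used to diagnose why. Often, the "error" is actually a bug in the original COBOL that the AI fixed, or a numeric-precision difference between the mainframe and x86 architectures (for example, packed-decimal or hexadecimal floating-point fields on the mainframe versus IEEE 754 binary floating point on x86).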
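
The differential-testing step in the list above can be sketched in a few lines of Python. This is a simplified illustration, not a production harness: `legacy_fee` and `candidate_fee` are hypothetical stand-ins for the two systems, and the only difference between them is a rounding choice of the kind that divergence analysis tends to surface.

```python
from dataclasses import dataclass
from decimal import Decimal, ROUND_DOWN, ROUND_HALF_UP
from typing import Callable, Iterable, List


@dataclass(frozen=True)
class Divergence:
    """One input for which the two systems disagreed."""
    transaction: Decimal
    legacy_output: Decimal
    candidate_output: Decimal


def differential_test(
    legacy: Callable[[Decimal], Decimal],
    candidate: Callable[[Decimal], Decimal],
    transactions: Iterable[Decimal],
) -> List[Divergence]:
    """Feed every transaction to both systems and collect exact mismatches."""
    divergences = []
    for tx in transactions:
        old, new = legacy(tx), candidate(tx)
        if old != new:  # exact comparison, no tolerance
            divergences.append(Divergence(tx, old, new))
    return divergences


def legacy_fee(amount: Decimal) -> Decimal:
    # Hypothetical legacy behavior: 2% fee, truncated to cents.
    return (amount * Decimal("0.02")).quantize(Decimal("0.01"), rounding=ROUND_DOWN)


def candidate_fee(amount: Decimal) -> Decimal:
    # Hypothetical rewrite: same fee, but rounded half-up to cents.
    return (amount * Decimal("0.02")).quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)


if __name__ == "__main__":
    inputs = [Decimal("100.00"), Decimal("10.25"), Decimal("10.10")]
    for d in differential_test(legacy_fee, candidate_fee, inputs):
        print(d)
```

With these inputs only `10.25` diverges (a fee of `0.20` from the legacy stand-in versus `0.21` from the rewrite). Whether that one-cent difference is a regression or a fix is exactly the question the divergence-analysis step has to answer.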

We are seeing a move toward "Human-in-the-Loop" for auditing, not coding. The developer's role shifts from writing syntax to reviewing the test cases generated by the AI. If the same model writes both the code and the tests, the risk of circular logic errors increases, because a single misreading of the legacy behavior can be encoded in both and pass unnoticed. Therefore, a best practice is emerging: use one model (e.g., Claude 3.7) to write the code and a different model (e.g., GPT-4o) to write the tests, an approach sometimes called adversarial AI validation.

The Post-Legacy Era: Outlook for Enterprise Infrastructure (2026-2030)

As these tools mature, the "Technical Debt" line item on enterprise balance sheets—often representing 20-30% of IT budget—will undergo a rapid repricing. By 2028, maintaining a COBOL monolith will be viewed not as a necessary evil, but as a governance failure.

We expect the emergence of the AI Mainframe Architect. This role does not write JCL. Instead, they orchestrate swarms of agents to decompose monolithic applications into microservices. They manage the "context budget" of the models and oversee the integration of generated code into CI/CD pipelines.

Map of Incentives

- Winners: Cloud Hyperscalers (AWS, Azure, GCP) gain the most as workloads finally become portable. System Integrators who pivot to AI-driven migration factories will see high-margin short-term revenue, though their "body shop" model of billing hours for manual migration is dead.

- Losers: Niche COBOL Staffing Agencies will see their premium rates collapse. Legacy Hardware Vendors face an existential threat if they cannot justify the hardware premium once the software lock-in evaporates.

- Mixed: CIOs gain agility but trade "known" legacy risks for "unknown" AI security risks (e.g., prompt injection in code generation).

What Would Change My Mind?

My analysis assumes that the hallucination rate for complex logic chains can be contained via deterministic testing. If we see a major "Black Swan" event—such as a top-tier bank suffering a ledger corruption due to a subtle AI-introduced logic error that passes standard unit tests—the industry will recoil. The regulatory backlash would freeze AI-driven migrations for years, returning us to the slow, manual "strangler fig" pattern of modernization.

The End of "Too Big to Rewrite"

The era of the "too big to rewrite" system is effectively over. The economics of modernization have inverted; the risk of staying on legacy code now outweighs the risk of automated refactoring. While hallucinations remain a critical implementation detail, they are a solvable engineering constraint, not a fundamental blocker. The next battleground for CIOs will not be preserving legacy code, but securing the AI pipelines that replace it.

FAQ

Can AI agents completely replace human COBOL programmers?

Not immediately. While agents excel at syntax translation and logic extraction, human experts are still required for architectural decision-making, validation of business logic, and ensuring the new system meets compliance standards. The role shifts from "programmer" to "auditor."

How does this impact IBM's mainframe business model?

It is a double-edged sword. While it threatens high-margin legacy support contracts, it also accelerates the modernization IBM advocates for (Hybrid Cloud). IBM is actively pivoting to sell the AI tools that facilitate this transition (watsonx), aiming to capture the value of the migration even if the compute workload eventually moves or diversifies.

Sources

- Anthropic – Claude Code and Agentic Capabilities

- IBM – watsonx Code Assistant for Z

- Reuters – IBM Launches Generative AI Tool to Modernize Mainframe Code

- Open Mainframe Project – COBOL Statistics and Ecosystem Data